The term “virtual reality” used to conjure up images of gamer geeks in headgear and gloves, and maybe for some it still does. But all of that is changing. Big studios and companies that are backing VR, including Fox, Sony, Google, Oculus, Samsung and HTC, to name a few, are quickly transitioning from trade show demos to consumer commodities. 2016 will see the release of several serious consumer VR options, including the Oculus Rift, which feature integrated VR audio.

No matter the creative team, all future players in the VR field need one thing to succeed—quality content. Without that, VR will be just another passing tech fad. But there is real potential in this new medium, for both content creators and users. It offers an experience that’s unlike anything found in traditional entertainment (yes, 3D films, we’re looking at you, too). It’s a truly immersive experience.

So how, exactly, do you go about creating content for a completely interactive, 360-degree, three-dimensional environment? Sharing some hard-won insight is sound designer/composer Dražen Bošnjak, owner of the award-winning music and sound design studio Q Department in Tribeca, N.Y. He’s worked on more than 10 high-profile VR projects, including The Martian VR Experience produced by Fox Innovation Lab, RSA Films, and The Virtual Reality Company (VRC), and directed by two-time Oscar winner Robert Stromberg.

“The Martian VR was by far the most complex in terms of technology and experience,” says Bošnjak. (The Martian VR Experience, coming to VR platforms later this year, has already generated quite a buzz, most recently at the Sundance Film Festival.) “It was great to see people come out of the VR experience. They were crying and sweating because they feel like they barely survived this zero-gravity float to the Hermes. It’s really amazing to see people’s reactions. I savor every moment of watching people go through it.”

To achieve that bodily fluid-inducing reaction, Fox Innovation Lab and VRC needed to hybridize the Hollywood feature film with an interactive game to produce a 20-minute cinematic VR adventure where a player feels like Mark Watney on Mars, trying to reach the rescue rendezvous with the spaceship Hermes. It’s all about believability: using visuals and sound to trick the player’s mind into thinking the experience is real.

On the sound side, it was up to Bošnjak to re-create The Martian’s Hollywood-caliber sound design in a way that would work for an interactive experience. As is often the case, nearly all the elements created specifically for the film were not useful as game assets. “The perspective is different for film and games,” explains Bošnjak. “In a film, you watch the action unfold and it happens the same way every time. In a game, you control the action, and it’s different every time you play, therefore numerous different assets are needed to account for the unpredictability of the action. Adding interactivity makes the sound asset list grow exponentially.”

To craft the sound for The Martian VR Experience, Bošnjak would go inside the VR project to get an idea of how he wanted to approach a particular moment. Then, in his studio, he’d use traditional tools and best post sound practices to create the sounds, always keeping in mind that what he was creating would end up as interactive, multidirectional, biphonic audio.

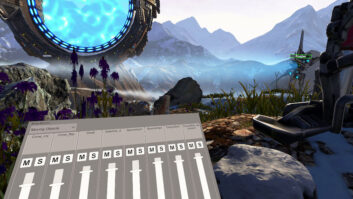

The user loads solar panels under the duress of an impending dust storm, in ‘The Martian VR Experience.’ Credit: Images Courtesy of 20th Century Fox, Fox Innovation Lab

“The goal is to bring together the videogame aspect of VR with the aesthetic of traditional audio engineering,” he says. “I wanted the sound for The Martian VR Experience to have real analog and vintage digital gear, like Neve and API EQs, Thermionic’s Culture Vulture distortion, the Eventide H3K Harmonizer, the old blue MXR Flanger, and the Lexicon 224XL. The real gear, not the plug-ins. I was using really old-school processing on the computer/robotic voices to bring that sweetness and real analog presence inside these environments. It fits hand in glove. It sounds luscious. We are not using any data compression on our sounds. There is no additional processing and filtering during playback or render. The way we created our mixes is what you hear on playback.”

Bošnjak also created several music tracks to go along with a selection of original compositions featured in The Martian. To make the film music interactive, it was carefully edited with supervision from director Stromberg and the VRC team.

“There’s a whole different approach to creating music when it’s for interactive media versus when you’re cutting music for a scene that will always play the same way every time,” Bošnjak says. “That was extremely challenging since we were using a beta customized version of Unreal Engine, based on UE 4.9, with no support for middleware or third-party plug-ins. We had to come up with our own mathematics that would drive the interactivity of the sound.”

For example, Bošnjak says, they broke the climactic zero-gravity float toward the Hermes into four sections, ran their own clock to create an imaginary tempo grid, and timed the user’s actions. They could then quantize the triggering of the interactive music parts to that grid and use the resulting data to play different parts of the music seamlessly.
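The article does not spell out the team's actual mathematics, but the general idea of quantizing a trigger to a tempo grid can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the function name `next_grid_time`, the 120 bpm tempo, the 4/4 bar length, and the choice to snap forward to the next beat or bar rather than to the nearest one.

```python
import math


def next_grid_time(event_time_s: float,
                   bpm: float,
                   beats_per_bar: int = 4,
                   quantize_to_bar: bool = False,
                   clock_start_s: float = 0.0) -> float:
    """Return the first grid point at or after a user action.

    Hypothetical helper: the grid is an imaginary tempo grid that starts
    at clock_start_s and ticks once per beat (or once per bar).
    """
    step_s = 60.0 / bpm              # length of one beat in seconds
    if quantize_to_bar:
        step_s *= beats_per_bar      # length of one bar in seconds
    elapsed_s = max(0.0, event_time_s - clock_start_s)
    # Small epsilon so an action that lands exactly on the grid
    # is not pushed to the following grid point by rounding error.
    steps = math.ceil(elapsed_s / step_s - 1e-9)
    return clock_start_s + steps * step_s


# The user acts 1.3 s into a section running at an assumed 120 bpm.
beat_start = next_grid_time(1.3, bpm=120.0)
bar_start = next_grid_time(1.3, bpm=120.0, quantize_to_bar=True)
```

With these assumed values the beat grid ticks every 0.5 seconds, so the next music part would be scheduled for the 1.5-second mark, or the 2.0-second mark if it should wait for the bar line. The delay between the action and the cue is what lets the music appear to hit the moment on its own.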

“We wanted it to seem like the music just happens to hit all the important moments, but actually it was designed that way,” Bošnjak says. “It was a really thrilling moment when we put the music inside of Unreal Engine and I could experience floating around above the capsule in zero gravity and everything was working so seamlessly. It was a rewarding experience and very fun to do.”

New Medium, New Tools

By the time Bošnjak started work on The Martian VR Experience he had already established sound tech company Mach 1. Working with Mach 1 Technical Director Dylan Marcus and a team, they designed a set of proprietary sound tools that allow Bošnjak to more effectively create and audition multidirectional, biphonic sounds in a virtual reality space.

“The old tools are completely incompatible,” Bošnjak explains. “You can sit at the mixing board and plug your headphones in, but how do you simulate yourself looking around? How do you design sound in a way that will react to the direction of your viewing? That was really how hardcore our problem was. We developed tools that let us create multidirectional, multidimensional sound, and they allow us to observe the sound from within that perspective.”

One issue they were quick to tackle was how to audition sound in real time in VR without having to first implement it into a game engine. Mach 1 devised a VR playback system using a pair of headphones equipped with head tracking. This allows Bošnjak to hear how his sound changes as he moves his head, a major component of VR sound.

“That is where it can get tricky for sound designers, composers and engineers,” he says. “Every time we needed to observe our work, we had to have the sound integrated back into the VR project. That means a team of tech people would have to be waiting around to do these laybacks. With our tool, you can hear it back immediately in real time. You just put these headphones on and you can listen around inside the VR project.”

After working with this tech for so long, Bošnjak says he sometimes feels underwhelmed with traditional headphones and playback formats where the sound environment doesn’t change when he turns his head. “It reminds me of small children who grew up with iPads and smartphones. They get irritated when screens are not interactive. I’m developing this addiction to multidirectional, interactive sound.”

In conjunction with head tracking, another important consideration for VR sound is spatialization: producing sound in a way that gives the illusion it is coming from a specific position in three-dimensional space. Sound waves react to objects in the environment, bouncing off walls, floors, ceilings and bodies. In VR, sound should do the same. While there are some plug-in options available for tackling spatialization, Bošnjak and his team had to develop their own method years ago when no such tools existed. His solution was to create multidirectional recordings and multiple mixes, each from a different fixed perspective. When loaded into the game engine, the proper mix plays for any given perspective, and transitions between mixes happen seamlessly.
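As a rough illustration of how playback might move between fixed-perspective mixes as the listener turns, the Python sketch below computes a gain for each mix from the head's yaw angle. It is not Mach 1's method, which the article does not detail. The four mixes spaced 90 degrees apart, the linear crossfade, and the name `mix_gains` are all assumptions chosen to keep the example small.

```python
from typing import List


def mix_gains(yaw_deg: float, num_mixes: int = 4) -> List[float]:
    """Return one gain per pre-rendered mix for a given head yaw.

    Hypothetical layout: mix i was created for a listener facing
    i * (360 / num_mixes) degrees. Only the two mixes nearest the
    current yaw are audible, crossfaded linearly.
    """
    spacing_deg = 360.0 / num_mixes
    position = (yaw_deg % 360.0) / spacing_deg   # e.g. 45 deg -> 0.5
    lower = int(position) % num_mixes            # mix at or before the yaw
    upper = (lower + 1) % num_mixes              # next mix, wrapping around
    frac = position - int(position)              # how far toward `upper`
    gains = [0.0] * num_mixes
    gains[lower] += 1.0 - frac
    gains[upper] += frac
    return gains


# Facing straight ahead, then halfway toward the second mix.
front = mix_gains(0.0)
between = mix_gains(45.0)
```

Facing straight ahead, only the first mix plays. At 45 degrees the first two mixes each play at half gain. A linear crossfade keeps the gains summing to one; an equal-power curve is a common alternative when the mixes are not closely correlated, and a real system would also need to handle pitch and roll.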

“There was no running our mixes through poor-sounding DSP filtering,” he recalls. “A lot of people are running their mixes through HRTF (head-related transfer function) filters, which are supposed to simulate the shape of your ear and how sounds react to your head and body, but there are so many problems with it. There is such a misconception in how it is being used. Unfortunately, all it is doing is degrading the original sound and often adding latency to the directional information if it is rendered during playback. Once you stop degrading the sound, you realize, ‘Wow, we really need audio engineers here to capture, create, and mix quality audio.’”

Traditional Techniques

Bošnjak explains that because VR is more immersive than 2D games, it’s not as forgiving of lapses in sound standards. “We need all of the audio talent we can find to help us make the sound in VR as beautiful as it would be for a feature film,” he says. “I want that artistry in VR. We can’t leave the sound solely up to the technology side of it, at least not yet. We need nearly a century’s worth of experience in producing great sound and soundtracks.”

The problem right now, Bošnjak points out, is the lack of a suitable workflow that lets engineers and other professionals across the audio industries, from film to music, get involved.

“We don’t have the tools that will allow them to get their hands inside the VR world,” he laments. “But all the skill sets they have for making something sound great, we need those in the VR world.”

While analog gear remains relevant, this new medium requires new tools, new methods and new workflows. In his years of creating sound for VR, Bošnjak has been identifying the things he could do in a traditional workflow that are still missing from his VR workflow.

“We made prototypes that I use when I’m doing my work,” he says. “I want to make hundreds and thousands of copies of these tools we’re creating and put them in the hands of the talented audio engineers so they can create for the VR environment, too. The best thing would be for the VR tech community to embrace the traditional audio engineering community, and together we can create a first-class experience.”