A perennial controversy in the music production world is whether or not there’s something inherently wrong with using presets. Some people take the “presets are for wimps” side of the argument, while others have no problem whatsoever with them.

To me, it’s a little more nuanced. Particularly when it comes to signal processors, the problem with presets is that they’re generic and can’t take into account the specifics of the track you’re processing, nor the context of the mix that track is part of.

But it also depends on the circumstances. For example, if you’re looking to process your drums with a pumping compression effect, the presets you’ll find in your 1176 emulation plug-in will likely do fine. Conversely, if you’re trying to correct or polish your electric guitar track with EQ, a generic preset will not come close to what you can dial in yourself. You can hear the track, whereas the preset’s author built the setting around a generic average.

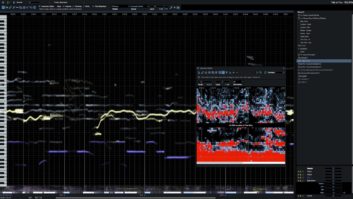

Of late, a new wrinkle, or I should say, a new technology, has appeared that changes the calculus about whether or not to do your own parameter setting. I’m talking about processor plug-ins developed with AI capabilities (machine learning, to be specific), which give them the ability to analyze audio and come up with custom settings.

You’ll find AI embedded in everything from compressors to EQs, and even reverbs. Sonible smart:reverb and iZotope Neoverb are two recent examples of AI-assisted reverbs.

In the case of Neoverb and other iZotope products like the mastering software Ozone or the mixing plug-in Neutron, the software also asks you some basic questions that provide it with context about your project.

And speaking of context, the algorithms in these products compare your audio to a machine-learned database developed from the analysis of massive amounts of music. There’s no way you could listen to millions of reference recordings before deciding how to set your compressor, but your AI-assisted plug-in can.

I have found the processors that include AI to be pretty handy. Their suggestions are usually useful and get you in the ballpark. Most developers make it pretty clear that the results their processors come up with are not attempting to be “final settings,” just good starting points.

Even if you have several AI-based processors at your disposal, it’s still important to at least be capable of coming up with settings manually. Understanding the tools you’re using is crucial for any engineer or producer.

While on the subject of AI and music, allow me to segue to a still-in-development product called Lumos from Tonic Audio Labs. It’s a hardware device with onboard processing that uses machine learning, and it’s designed as a songwriting tool.

The company touts the battery-powered unit as “your new songwriting collaborator.” According to the Tonic Audio Labs website, Lumos is small enough to carry around with you (I couldn’t find any dimensions on the website, however), so it’s there when inspiration strikes.

Not only will Lumos be able to record your song ideas, but it will also offer suggestions for chords, arrangements, rhythms and even lyrics. The last of those is what particularly caught my eye; I could use help in that area.

Tonic Audio Labs says the critical component in Lumos is a “Tensor Processing Unit, or TPU—a high-speed machine-learning ASIC chip developed by Google that makes hardware devices incredibly smart.”

In case you were wondering, machine learning as used in these products is a form of what’s called Narrow AI, which focuses on a relatively limited task. It’s not General AI, the still-hypothetical kind that would be able to “think.” So no need to worry that your smart compressor plug-in will eventually take over the world.