What is the point of restoring a historical music recording? Is it to re-create the recording session so we can hear the original instruments, the room in which they were recorded and everything that their sound passed through, good and bad, in the signal chain? Or is it simply to listen to the music?

Amazing things are now possible in audio restoration, and we have techniques to remove noise, room resonances, frequency anomalies and distortion that no one could have dreamed of 25 years ago. But what if we could take away all of the circumstances and equipment surrounding the music and have the actual performers — ignoring the fact that they’ve been dead for decades — play in front of us on real musical instruments?

That’s the goal of pianist-turned-computer-scientist-turned-musical-researcher John Q. Walker and his crew at Zenph Studios. And at one level, they seem to have accomplished that goal. They’ve also managed to achieve, in a limited but important way, one of computer music’s Holiest of Grails: polyphonic pitch extraction. But their process is not going to show up as a plug-in any time soon.

Simply, Walker’s group of scientists, programmers and musicians is analyzing piano recordings of many different vintages, translating them into MIDI files and reproducing them on a high-tech player piano: a Yamaha Disklavier Pro concert grand. But in the process, they are redefining audio analysis, extending the limits of what a player piano can do and even expanding the meaning of MIDI.

Their work has been getting a lot of ink in the trade and popular press, but while the coverage has concentrated on the “miraculous” aspects of the process (“Art Tatum comes alive in your living room!”), the nuts and bolts, and the truly innovative engineering processes Walker has put together, have been largely passed over. Much of the reason for this is that the technology is very specialized and very complicated. And some of it is due to “hand-waving” on the part of Walker: that academic practice of diverting the audience’s attention when you don’t want to, or can’t, describe a process or solve an equation. But that’s understandable, as a lot of his concepts are patent-pending.

In fact, Walker is a talkative and truly amiable fellow, as I discovered when I sat down with him and a Disklavier Pro grand piano in a relatively quiet downstairs demo room at the recent AES conference. Since I know MIDI, know something about piano repertoire and happen to know a lot about Disklaviers, I was his perfect audience. I learned a lot about what his team has been doing, and I was impressed.

Walker grew up in Texas and Illinois, and started playing the piano at age five. He got a college degree in piano performance, and one of his teachers was Ruth Slenczynska, who had been one of only two students of the legendary composer/pianist Sergey Rachmaninov. But he also got a math degree, then earned a Ph.D. in computer science and went to work for IBM. Some 17 years later, he founded a company that made tools for measuring network performance. When that company was sold to a larger firm, he had the freedom to go back and work on music again.

But he wasn’t interested in performing; rather, he wanted to apply his computer skills to historical performances, and Zenph was born. As for the name, “It’s the German word for mustard,” he explains, “although they spell it ‘senf.’ Our previous company spent a lot of time trying to find a name and went through 400 or 500 of them. Zenph was a leftover, and since we already had the domain name, we just went with it.”

He summarizes his work with a one-sentence question: What would it take to hear Rachmaninov, who died in 1943, play again? Part of the pianist’s legacy is preserved in recordings that are plagued with all of the problems of early records. There are also performances that are preserved on player piano rolls, which the pianist made using one of the “reproducing pianos” — player pianos that let a pianist record music in real time, as opposed to making rolls with manual punches — that were popular in the early part of the 20th century. But player piano rolls, even reproducing ones, says Walker, “don’t have enough bits. If you have three holes in a row that are the same note, they’re played identically. A real pianist never plays two notes the same way.” Early reproducing pianos also lacked subtlety when it came to dynamics; they specified a limited number of dynamic levels, and having simultaneous notes sounding at different dynamics was impossible.

We now have player pianos that can be controlled with far more accuracy and subtlety, such as Yamaha’s Disklaviers, which use an internal computer and a complex array of high-precision servos, sensors and solenoids to operate the keys, hammers, dampers and pedals. But most of the Disklavier line still isn’t up to the task that Walker had in mind. “We had to wait until the hardware got good enough,” he says. “The answer was the Disklavier Pro. It has 10 times the precision of the other Disklavier models. Every note-on has 10 velocity bits, not seven. Every note-off has 10 bits, too, and that’s important for articulation sense, which is how you pull your finger off at the end.”

The Disklaviers are MIDI instruments, but even with its sub-millisecond accuracy and its 7-bit velocity and controller values (128 steps, from 0 to 127), standard MIDI can’t carry everything the Disklavier Pro is capable of. So Disklaviers use something Yamaha calls “high-resolution MIDI,” which Walker figured out uses non-registered parameter numbers (controller messages whose meaning the MIDI spec leaves to each manufacturer) to carry the extra bits for the note commands. “An ordinary sequencer couldn’t keep up with this,” he says, “because it would have no idea how to deal with these controllers.”
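To put the bit counts in perspective, here is a small Python sketch of the resolution arithmetic. The way the extra bits are packed below (a standard 7-bit velocity plus a 3-bit "fine" value sent separately) is purely an assumption for illustration; Yamaha's actual encoding isn't spelled out here, and the function names are hypothetical.

```python
# Illustrative sketch only: the 7+3 bit split is an assumed packing,
# not Yamaha's documented high-resolution MIDI format.

def levels(bits: int) -> int:
    """Number of distinct values an unsigned field of `bits` bits can hold."""
    return 2 ** bits

def split_velocity(v10: int) -> tuple[int, int]:
    """Split a 10-bit velocity (0-1023) into a 7-bit coarse and 3-bit fine part."""
    if not 0 <= v10 <= 1023:
        raise ValueError("velocity must fit in 10 bits")
    return v10 >> 3, v10 & 0b111

def join_velocity(coarse: int, fine: int) -> int:
    """Rebuild the 10-bit velocity from its coarse and fine parts."""
    return (coarse << 3) | fine

if __name__ == "__main__":
    print(levels(7), levels(10))   # steps available in 7 and 10 bits
    print(split_velocity(517))     # coarse and fine parts of one value
```

Ten bits give 1,024 steps against seven bits' 128, an eightfold increase, which is in the same ballpark as Walker's "10 times the precision." A receiver that only understands the coarse byte would still hear a plausible, if blunter, performance.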

Because of all the extra data, the data stream becomes too heavy for an ordinary MIDI cable to carry without encountering timing problems. So Walker doesn’t bother: “We communicate by loading files onto the piano’s hard drive and their computer is connected directly to the piano mechanism. As far as we’ve been able to see, there is no limitation on how fast the computer can send data.”
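A quick back-of-the-envelope calculation shows why the cable becomes the bottleneck. The MIDI serial rate (31,250 bits per second, with each byte framed by a start and a stop bit) is standard; the idea that every note event needs one extra 3-byte controller message is a simplifying assumption of this sketch, as is ignoring running status.

```python
# Back-of-the-envelope MIDI cable timing.
# Assumption (hypothetical): each high-resolution note event costs one
# 3-byte note message plus one extra 3-byte controller message.

BAUD = 31_250               # MIDI 1.0 serial rate, bits per second
BITS_PER_BYTE_ON_WIRE = 10  # 8 data bits + start bit + stop bit

def wire_time_ms(n_bytes: int) -> float:
    """Milliseconds needed to send n_bytes down a standard MIDI cable."""
    return n_bytes * BITS_PER_BYTE_ON_WIRE / BAUD * 1000

def chord_time_ms(notes: int, bytes_per_note: int) -> float:
    """Time to transmit every note of a chord, one after another."""
    return wire_time_ms(notes * bytes_per_note)

if __name__ == "__main__":
    print(chord_time_ms(10, 3))  # ten plain note-ons
    print(chord_time_ms(10, 6))  # ten note-ons, each with an extra controller
```

Under these assumptions a ten-note chord that takes just under 10 ms to send as plain MIDI takes nearly 20 ms with the extra data, so the last note of a "simultaneous" chord arrives audibly late. Loading a file onto the piano sidesteps the serial link entirely.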

The other side of the equation is extracting the performance data from the recordings. “We read all the papers about this from the past 20 years,” says Walker, “and we realized the ways to get at this problem were not only signal processing paths. We have written a lot of software, but we viewed this as an engineering process. What are all the things we have to do to get 100-percent accuracy? Some of them need human hands, human assistance.

“In the early steps of the analysis, we used musicians to determine the right dynamic range. Early recordings have narrow dynamic ranges, so we have a professional pianist decide what the range would be if it were played now. We don’t quite know a way to do that automatically yet. Then we have DSP software go through the entire recording and look for everything that might be notes. We have models of what piano notes look like, but it’s a very liberal model; it also finds reflections off the wall and harmonics. But everything that isn’t a solo piano, like recording noises and mechanical squeaks, vanishes. Then we do more passes to fit three-dimensional models we have of piano notes against these, and that distinguishes real notes from harmonics.

“We do a pedaling pass. If all the dampers are up, the harmonics and the total picture of the sound look totally different. But we don’t worry about half-pedaling. The only thing that counts is when the hairs of the felt touch the string.

“I look forward to the day when the process is all automatic, but I don’t expect it very soon, especially because people know the recordings. So we do the best we can with the computer and then we do touch-ups with really good ears. We never consult with a score before we start — that was an early design point. People don’t play what’s in the score — they miss notes, they add extra notes. [French pianist Alfred] Cortot adds an extra 10 percent more notes. And when you’re working with jazz improvisations, there are no scores. The historical evidence is the recording. If a note is there, it will affect the overtones and the mappings. But if we can’t detect it, then we can’t detect it.

“We do use a score at one point: When we’re 98 percent done, we have the piano play and we sit and listen, and then it’s nice to have the score to mark things that aren’t accurate — something we can circle with a red mark and say, ‘That note is not right. Why?’ You should see our scores — they have the weirdest markings you can imagine. But even if we don’t have scores, since we’re basically in MIDI, we can dump it into Finale or Sibelius and print it out and look at it that way.”
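To see why a "very liberal" first pass needs a second one to separate real notes from harmonics, consider this toy Python sketch. It is emphatically not Zenph's method, which fits three-dimensional models of piano notes; it is a much cruder stand-in that simply flags any detected frequency sitting near a whole-number multiple of a lower one. The function name and the 3 percent tolerance are hypothetical.

```python
# Toy stand-in, not Zenph's algorithm: treat a detected frequency as a
# probable harmonic if it lies near an integer multiple (2x, 3x, ...) of
# a lower frequency already accepted as a fundamental.

def likely_fundamentals(freqs, rel_tol=0.03):
    """Return the frequencies that do not look like overtones of lower ones."""
    kept = []
    for f in sorted(freqs):
        is_harmonic = False
        for base in kept:
            ratio = f / base
            n = round(ratio)
            # Accept as a harmonic only for multiples of 2 or more.
            if n >= 2 and abs(ratio - n) / n <= rel_tol:
                is_harmonic = True
                break
        if not is_harmonic:
            kept.append(f)
    return kept

if __name__ == "__main__":
    candidates = [220.0, 440.0, 660.0, 523.25]  # A3, its overtones, and C5
    print(likely_fundamentals(candidates))
```

The weakness is obvious: if the pianist really did play the A an octave above, this rule throws it away along with the overtone. That ambiguity is the reason a simple frequency test is not enough, and why the process Walker describes leans on detailed note models and, in the end, on trained ears.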

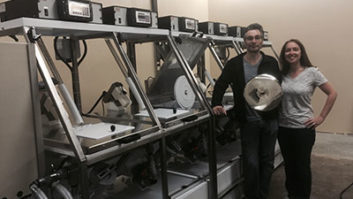

Testing the output of the process is something else that Zenph needed to develop. The group has a tightly temperature- and humidity-controlled room for their pianos, and the instruments are maintained to a degree most concert halls would envy. “Our first tendency was to sit in the room with the piano, and when we heard something we didn’t like, we’d go, ‘Oh that’s not quite right,’” Walker says. “But we learned that was wrong. We’re making the piece sound like how we want it to sound, but there’s no historical evidence that supports that.

“Instead, we make a new mono recording, one mic in the room, as close as we can to the original recording, and then we play those two recordings at the same time, one in each channel. You can’t match the .WAV files, but you can analyze the timings. The thing that tells your brain ‘right or wrong’ more than anything else is the timing of what’s played. So you sit there with headphones, and when the two ears match, that’s the best way to analyze. Wrong notes can be a lot of things: The touch isn’t right or the note is the wrong duration. But if it’s right when you listen this way, it must be right, whether you like it or not.

“When you just listen to the Disklavier Pro by itself play Glenn Gould’s Goldberg Variations, you wonder whether he played it that way because it sounds a little ragged. But when you compare the two recordings, they match. That was a real revelation. I didn’t sleep well the night we figured that out — it was such an unexpected step.”
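The timing comparison can be sketched in a few lines of Python. This assumes the note onset times (in seconds) have already been pulled from both the original recording and the new mono recording of the Disklavier; the function name, the nearest-onset matching and the 10 ms tolerance are all hypothetical choices for illustration, not a description of Zenph's tools, which in Walker's telling come down to a pair of headphones.

```python
from bisect import bisect_left

# Hypothetical sketch: flag onsets in the original recording that have no
# counterpart in the re-performance within a small time tolerance.
# Note: this is a nearest-neighbor check, not a strict one-to-one matching.

def timing_mismatches(original, replay, tolerance=0.010):
    """Return onset times from `original` with no `replay` onset within tolerance."""
    replay = sorted(replay)
    misses = []
    for t in original:
        i = bisect_left(replay, t)
        # Only the onsets just before and just after t can be the closest.
        neighbors = replay[max(i - 1, 0): i + 1]
        if not any(abs(t - r) <= tolerance for r in neighbors):
            misses.append(t)
    return misses

if __name__ == "__main__":
    original = [0.0, 0.5, 1.0]
    replay = [0.002, 0.53, 1.004]
    print(timing_mismatches(original, replay))  # onsets that drifted too far
```

Anything the function returns is a spot worth circling in red on the score. An empty list means the two performances line up to within the chosen tolerance, whether you like the result or not.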

As anyone who heard Zenph’s renditions at AES of Gould, Cortot and Tatum (from a recording made by a fan on a portable reel-to-reel deck at a cocktail party) will agree, the re-creations are uncanny. The timings and variations in the keystrokes are so subtle, it’s easy to imagine the pianist is in the room, his fingers pushing the keys down. Still, Walker was less than thrilled with the conditions in New York, which illustrate one of the limitations of this technology. “If you play, say, a [Vladimir] Horowitz file on an instrument that’s not calibrated or tuned, it won’t sound authentic,” he says. “With all the rain we had during the show, the piano was having a very hard time. We almost closed the demo room the last day of the show because four days of hard usage in that weather had taken a toll on the piano.”

Convincing others of his work’s value is something Walker realizes is not going to be simple. “We have to establish an extremely high threshold,” he says. “People know what the original performances sound like, and if our re-recordings aren’t identical, they will know that’s not how Horowitz played.”

And once all the technical hurdles are overcome, there’s still the question, what’s the point? Walker is looking at a few areas in which he thinks his process can have an impact. “We’re working right now with the record companies,” he says. “We take their old recordings, do the conversion and hand them back as high-res MIDI files. Then they can make a new recording.

“We’re new and we don’t want to mess up,” he continues, “so we’re starting with the copyright holders. No artist or studio contracts anticipated this technology, so we have to be careful. We’re in the midst of putting together our first contract right now,” which will involve recordings of a famous pianist from times past whose name Walker won’t reveal.

He also sees its use in analyzing jazz performances. Every performance of a tune, even by the same artist, can be completely different from every other. Jazz transcriptions are usually done laboriously — often long after the fact — by a fan, and then you are left with a written record of only a single performance. “We don’t have comparative scores of Art Tatum playing a song five different times,” he says, “but now we could. A colleague of ours at Duke University says that this will make jazz a much more respected academic field because we’ll have scores to compare, to mark, to study, as opposed to just listening to recordings.”

Another use is for musicians who don’t perform well in a studio setting. Says Walker, “He can play it at home, where he’s more comfortable, on his upright or whatever, and then the producer can bring it in and redo it in the studio.

“The key point,” he summarizes, “is that now the performance can be separated from the medium,” which, of course, has been the point of MIDI all along. But today, Walker is taking that idea to a new level. “We’ve all adopted word processing; the text can be separate from the book. This is the same story. So forever on in history, as the medium improves, you can get better audio recordings. It’s the biggest perception hurdle that we have with people: If a recording was made in 1930, then does the way it sounds have to be from 1930? That’s not true anymore.”

Walker also sees the technology going beyond pianos, although that would present a whole new set of challenges. “Things that are plucked or struck are easier to do and distinguish than things that are bowed or blowed,” he says with a laugh. “We could see doing a jazz trio, for example, but we don’t yet have a high-resolution bass. So we need to be commissioning instruments. We may be able to do it with robotics, but probably the virtual instruments will get there first.”

And how about transcribing a symphony orchestra? “Orchestral stuff will happen in our lifetimes. It’s a matter of computing power and a whole lot of equations. Our ears can sort out all that information, so we will eventually be able to do it with computers. People have been telling us it can’t be done, but we’re engineers, we can do this.”

Paul Lehrman never plays anything the same way twice, even if he wants to.