Last month, I looked at the subjects of chips, clocks, word length and DAW power. This month, I’m examining an impending tipping point in desktop computers: the introduction of several new consumer processors that will change the way you do your work.

Let’s step back a bit so that we can get our bearings. If you remember from last month, I looked at the inevitable progression from 4 to 8 and then 16 bits, on up to the current crop of 32-bit Central Processing Units (CPUs), the heart of any computer-based product. All the while, Intel was hyping what the folks at Tom’s Hardware refer to as “its self-perpetuated myth that processor performance is based on clock speed alone.” Now, with clock speeds reaching the limits of current technology, a feature that mainframes and scientific workstations have long enjoyed has started to make an impact on the Mac and Windows desktop: Processors that crunch true 64-bit data words are now breaking out of the chip foundry and onto your desktop.

To keep the heavy-duty, “enterprise-class” IT customers happy, and to provide something for mere mortals to lust after, the x86 chip vendors began the migration from 32-bit to 64-bit processors several years ago. At present, high-end Windows users are in the middle of a marketing tug-of-war between Intel/HP and Advanced Micro Devices (AMD), and I’d place my money on AMD. Here’s why: Intel and Hewlett-Packard’s new 64-bit Itanium processor family (the Merced and McKinley chips) and their Intel IA-64 architecture are designed as a clean break from the past. Legacy “x86” code written for the 8-, 16- and 32-bit range of past processors runs in emulation on an Itanium, making overall performance for legacy software relatively poor; “relatively,” in this case, translates into slower than the current range of 32-bit CPUs. According to eWeek’s technology editor, Peter Coffee, “Intel is betting that on-chip instruction scheduling hardware, which emerged on x86 chips in the late 1990s to inject new life into 1980s-style code, is nearing its limit. With the Itanium, Intel proposes to examine programs when they are compiled into their executable form and encode concurrent operations ahead of time. Intel calls this approach EPIC — Explicitly Parallel Instruction Computing — and it is the genuine difference between the Itanium and AMD’s x86-64.” Trouble is, the Itanium is hobbled by weak backward compatibility for 32-bit code, making it a slowpoke in that regard.
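Peter Coffee’s description of EPIC, in which concurrency is encoded at compile time rather than discovered by scheduling hardware at run time, can be illustrated with a toy scheduler. This is an assumed simplification, not Intel’s compiler: it keeps instructions in program order (real compilers also reorder), and it packs up to three mutually independent operations into each issue group, a width that loosely echoes the three-instruction bundles Itanium uses. The `Instr` type, register names and sample program are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Instr:
    text: str          # mnemonic, for display only
    dest: str          # register this instruction writes
    srcs: tuple = ()   # registers this instruction reads

def group_for_parallel_issue(program, width=3):
    """Toy compile-time scheduler (hypothetical): walk the program in order and close
    the current issue group when it is full, or when the next instruction reads or
    rewrites a register written inside that group. Each group can then issue together."""
    groups, current, written = [], [], set()
    for ins in program:
        depends = ins.dest in written or any(r in written for r in ins.srcs)
        if current and (depends or len(current) == width):
            groups.append(current)
            current, written = [], set()
        current.append(ins)
        written.add(ins.dest)
    if current:
        groups.append(current)
    return groups

program = [
    Instr("ld  r1 = [x]", "r1"),
    Instr("ld  r2 = [y]", "r2"),
    Instr("add r3 = r1, r2", "r3", ("r1", "r2")),
    Instr("ld  r4 = [z]", "r4"),
    Instr("mul r5 = r3, r4", "r5", ("r3", "r4")),
]

for group_no, group in enumerate(group_for_parallel_issue(program)):
    print(group_no, " | ".join(i.text for i in group))
```

Five operations land in three groups here, because the compiler, not the chip, has already worked out which ones can run side by side.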

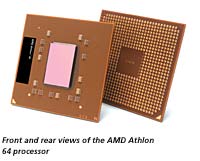

Meanwhile, AMD has seen fit to build legacy support, or backward compatibility, into its AMD64 technology, extending the Intel x86 instruction set to handle 64-bit memory addresses and integer data, while providing a continuous upgrade path as applications are rewritten or recompiled for the new capabilities of 64-bit chips. Dirk Meyer, senior VP at AMD, stated that the company “designed its AMD Opteron and upcoming [it was due out last month] AMD Athlon 64 processors to deliver quick and measurable returns on investment with low total costs of development and ownership; protect investments in the existing 32-bit computing infrastructure and limit the costs of transition disruption by transparently mixing 32-bit and 64-bit applications on the same platform; and simplify migration paths and strategies, allowing customers to choose when and how to transition to 64-bit computing.” In a word: value. Right on, sez I!

This nod to customers’ real-world needs is something that I, for one, appreciate. I like the fact that, when possible, new and improved doesn’t necessitate heaving out your existing stuff. As I mentioned last time, the PowerPC Alliance made the same sensible choice when it built 64-bit compatibility into its family of processors. Alas, the PPC Alliance dissolved in 1998 when Motorola assumed control of the PowerPC chip-design center in Austin, Texas. IBM continued to develop PPC chips for its own uses, and that effort has resulted in the latest member of the POWER family: the 970. Announced at last October’s Microprocessor Forum, the fifth-generation “G5” is, like the Itanium and Opteron, a true 64-bit machine, with support for 64-bit integer arithmetic vs. 32-bit for the G4, two double-precision floating-point units (FPUs) vs. one for the G4, and a 128-bit AltiVec vector processor. According to Apple’s developer Website, the G5 has a “massive out-of-order execution engine, able to keep more than 200 instructions in flight versus 16 for the G4.”
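What does 64-bit integer arithmetic buy a DAW? One hypothetical case is a counter that tracks the current sample position. The sketch below assumes an unsigned counter and a 96 kHz session (both illustrative choices, not how any particular DAW keeps time) and masks Python’s unbounded integers to a fixed width to mimic a hardware register.

```python
SAMPLE_RATE_HZ = 96_000  # hypothetical session sample rate

def wrap(value, bits):
    """Mimic an unsigned hardware register `bits` wide: results wrap modulo 2**bits."""
    return value % (1 << bits)

def hours_until_wrap(bits, rate_hz=SAMPLE_RATE_HZ):
    """Hours of audio an unsigned sample counter of this width can index before overflowing."""
    return (1 << bits) / rate_hz / 3600

print(f"32-bit counter: {hours_until_wrap(32):.1f} hours")              # about 12.4 hours
print(f"64-bit counter: {hours_until_wrap(64) / (24 * 365):.3g} years")

position = 1 << 32                             # first sample past the 32-bit range
print(wrap(position, 32), wrap(position, 64))  # 0 4294967296
```

A 32-bit register silently rolls back to zero after about half a day of 96 kHz audio; a 64-bit one runs for millions of years.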

A much longer execution pipeline, up to 23 stages vs. seven for the G4, means that bogus branch predictions are more costly: the deeper the pipeline, the more partly processed work must be thrown away and refilled when a guess turns out to be wrong. In a way, the whole PPC vs. Intel debate boils down to prediction, and to how designers augur upcoming processing requests. Here’s why: CPUs are designed to execute, or process, instructions in a predictable order. Think of a modern factory, with parallel production lines all building subassemblies that are merged into a finished commodity. The output of one assembly line feeds the input of another. Once all of the subassembly lines are filled, an efficient manufacturing engine is created. In the world of CPU design, the assembly lines are called pipelines, and once all parallel pipelines are filled, an efficient data-processing engine chugs along. By the way, Intel’s EPIC is that company’s answer to efficient parallel execution: Keep the pipelines full with nary a bubble in sight.
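The column doesn’t say which prediction scheme the G4 or G5 uses, so the sketch below swaps in a classic textbook scheme, the 2-bit saturating counter, purely for illustration. It also makes a crude assumption: each misprediction throws away roughly one pipeline’s worth of work, so the penalty in cycles equals the pipeline depth. Real penalties depend on where in the pipeline a branch is resolved.

```python
def simulate_2bit_predictor(outcomes):
    """Count mispredictions of one 2-bit saturating-counter branch predictor.
    Counter states 0-1 predict 'not taken'; states 2-3 predict 'taken'."""
    counter = 2  # assumed starting state: weakly 'taken'
    misses = 0
    for taken in outcomes:
        if (counter >= 2) != taken:
            misses += 1
        # Nudge the counter toward the actual outcome, saturating at 0 and 3.
        counter = min(counter + 1, 3) if taken else max(counter - 1, 0)
    return misses

# A loop branch taken 7 times, then falling through once, with the loop run 100 times.
pattern = ([True] * 7 + [False]) * 100
misses = simulate_2bit_predictor(pattern)

for depth in (7, 23):  # G4-like vs. G5-like depth, using the crude penalty = depth model
    print(f"{depth}-stage pipeline: {misses} misses -> ~{misses * depth} lost cycles")
# 7-stage pipeline: 100 misses -> ~700 lost cycles
# 23-stage pipeline: 100 misses -> ~2300 lost cycles
```

The predictor guesses just as well on both chips; only the price of each wrong guess changes with depth.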

In a factory, if a part is missing from one assembly, then it holds up all other lines that are dependent on the output of the suspended line. In a CPU, if the correct datum isn’t available for processing in any pipeline, then it causes a discontinuity in the efficient use of the pipeline’s program-execution capabilities. That discontinuity, or “bubble,” happens whenever the task-scheduling manager screws up in predicting what “part,” or piece of data, is needed at the input to the pipeline. It’s as if the purchasing manager in a factory didn’t order a crucial widget to build a subassembly. The lack of that widget shuts down the whole factory.
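The missing-widget bubble can be counted with a toy model. The sketch assumes a simple in-order pipeline that starts at most one instruction per cycle and stalls whenever a source register isn’t ready yet; the three-cycle load latency, five-stage depth and register names are all hypothetical. Reordering the same instructions shows how slipping independent work into the gap (the job EPIC hands to the compiler) shrinks the bubble.

```python
def run_pipeline(program, depth=5):
    """Toy in-order pipeline that starts at most one instruction per cycle.

    program: list of (dest, srcs, latency) tuples, where latency is how many cycles
    after issue the result in `dest` can be consumed. An instruction waits until all
    of its source registers are ready; every cycle it waits is a bubble.
    Returns (total_cycles, bubbles).
    """
    ready = {}        # register -> first cycle at which its value may be used
    next_slot = 0     # earliest cycle the next instruction could issue with no stall
    bubbles = 0
    last_issue = -1
    for dest, srcs, latency in program:
        issue = max([next_slot] + [ready.get(r, 0) for r in srcs])
        bubbles += issue - next_slot          # idle cycles spent waiting for data
        ready[dest] = issue + latency
        last_issue = issue
        next_slot = issue + 1
    # The last instruction still has to flow through every stage before it finishes.
    total_cycles = last_issue + depth if program else 0
    return total_cycles, bubbles

LOAD_LATENCY = 3  # hypothetical: a load's result is usable 3 cycles after it issues

program = [
    ("r1", (), LOAD_LATENCY),   # load r1 from memory
    ("r2", ("r1",), 1),         # add that needs r1, so it must wait for the load
    ("r3", (), 1),              # independent operation
]
reordered = [program[0], program[2], program[1]]  # slip the independent op into the gap

print(run_pipeline(program))     # (9, 2)
print(run_pipeline(reordered))   # (8, 1)
```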

Sixty-four bits. Now what, you may ask, does that buy you? Well, how about the ability to address more than 4 GB of RAM, and to practically put more than 2 GB of it to work? In theory, a 64-bit address can reach 16 exabytes, or more than 16 million terabytes. Far-fetched, you say? Not really, when you consider that, nowadays, 1 GB of DDR PC3200 RAM will cost you only $190, and many applications will happily use as much RAM as they can steal. With virtual memory, more RAM means less disk swapping, which results in significantly better overall performance.
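The arithmetic behind those figures is quick to check. The sketch uses binary units (1 GB = 2^30 bytes, 1 TB = 2^40 bytes) and the $190-per-GB price quoted above. Real 64-bit chips may wire up fewer physical address lines than the full 64 bits, so the larger number is the architectural ceiling only.

```python
GIB = 2 ** 30             # bytes in a binary gigabyte
TIB = 2 ** 40             # bytes in a binary terabyte
PRICE_PER_GB_USD = 190    # the column's price for 1 GB of DDR PC3200

reach_32 = 2 ** 32        # bytes a 32-bit address can name
reach_64 = 2 ** 64        # bytes a 64-bit address can name

print(reach_32 // GIB, "GB reachable with 32-bit addresses")   # 4
print(reach_64 // TIB, "TB reachable with 64-bit addresses")   # 16777216
print(f"RAM to fill a 32-bit address space: ${(reach_32 // GIB) * PRICE_PER_GB_USD}")  # $760
```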

Another benefit is that these new 64-bit puppies are designed explicitly for SMP configurations. SMP, or Symmetrical MultiProcessing, is one of several design approaches that allow more than one CPU to share the computing load, divvying up responsibilities among the processors. “Two-way,” or two-CPU, computer configurations are typical for desktops, which means that one CPU can handle all of the UI, networking and other mundane tasks, while the second CPU concentrates solely on your media application’s needs.
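Here is one way to picture a two-way SMP box splitting a media job. The sketch uses Python’s standard `concurrent.futures.ProcessPoolExecutor` with two worker processes, one per CPU in a two-way machine; the operating system, not this code, decides which physical CPU runs each worker. `apply_gain` is a hypothetical stand-in for real DSP work, and the half-and-half split is an assumption.

```python
import math
from concurrent.futures import ProcessPoolExecutor

def apply_gain(block, gain=0.5):
    """Hypothetical stand-in for real DSP work: scale every sample in one block."""
    return [s * gain for s in block]

def split(samples, parts):
    """Cut `samples` into at most `parts` contiguous blocks of near-equal size."""
    size = max(1, math.ceil(len(samples) / parts))
    return [samples[i:i + size] for i in range(0, len(samples), size)]

def process_on_two_cpus(samples):
    """Process each half of `samples` in its own worker process, then rejoin in order."""
    # Two workers, one per CPU in a two-way SMP machine; the OS picks the physical CPU.
    with ProcessPoolExecutor(max_workers=2) as pool:
        blocks = list(pool.map(apply_gain, split(samples, 2)))
    return [s for block in blocks for s in block]
```

Because worker processes may re-import the calling module, call `process_on_two_cpus` from under an `if __name__ == "__main__":` guard; given `[1.0, 2.0, 3.0, 4.0]`, it should return `[0.5, 1.0, 1.5, 2.0]`. Processes rather than threads are used so that both CPUs can run Python code at once.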

A third, though indirect, advantage is that 64 bits facilitate more widespread double-precision data handling, which, in turn, means better quality for your data “product.” For many media moguls, quality appears to be one of the last things on their minds, but for myself and one or two other engineers out there, delivering a realistic acoustic performance to the consumer is an important consideration, and double-precision processing really helps.
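To see why the extra precision matters, consider a long chain of additions, such as a running sum on a mix bus. This standard-library sketch holds a running total in 32-bit single precision, rounding after every step, and compares it with ordinary 64-bit double-precision arithmetic. The step size and count are arbitrary; the point is how rounding error piles up, not any particular audio measurement.

```python
import struct

def to_float32(x):
    """Round a Python float (an IEEE 754 double) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]

def running_sum(step, count, single=False):
    """Add `step` to a total `count` times. With single=True, the step and the total are
    held in 32-bit precision, rounding after every addition."""
    if single:
        step = to_float32(step)
    total = 0.0
    for _ in range(count):
        total += step
        if single:
            total = to_float32(total)
    return total

target = 100_000.0  # 0.1 added one million times, in exact arithmetic
err_single = abs(running_sum(0.1, 1_000_000, single=True) - target)
err_double = abs(running_sum(0.1, 1_000_000) - target)
print(f"single-precision error: {err_single:.6g}")
print(f"double-precision error: {err_double:.6g}")
```

The single-precision total drifts off by whole units, while the double-precision error stays at a tiny fraction of one.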

To be realistic, though, 64-bit processors won’t buy us squat until software vendors also drink the 64-bit Kool-Aid. Unless your favorite application is rewritten or, at the very least, recompiled to take advantage of these next-gen processors, you won’t see any improvements. Even worse, under some circumstances, you may actually experience crappier overall performance due to your 32-bit application running in “compatibility” mode, essentially emulation, on a 64-bit Itanium. Because both the Opteron and PowerPC families were designed with transparent, low-level compatibility for legacy or 32-bit applications, they’ll run your old-school stuff just fine, thank you very much.
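Whether a program is running as a native 64-bit build or as a 32-bit build on 64-bit hardware isn’t always obvious from the outside. As one small illustration (Python is simply the example program here, not something the column discusses), a process can check its own pointer width with the standard `struct` and `sys` modules.

```python
import struct
import sys

def process_bits():
    """Width of a native pointer in this process: 32 for a 32-bit build, 64 for a 64-bit one."""
    return struct.calcsize("P") * 8

print(f"Running as a {process_bits()}-bit process")
print(f"sys.maxsize exceeds 2**32: {sys.maxsize > 2**32}")
```

A 32-bit build reports 32 here even on a 64-bit CPU, which is exactly the situation in which it gains nothing from the wider chip.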

Another way of looking at all of this 64-bit hoo-ha is that RISC and CISC architectures, once clear and polar opposites, are now both moving toward a common ground, and the distinctions are increasingly blurry. It may take a few years, though, before the likes of Digi get around to rewriting their stuff for G5s, Opterons and Itaniums. In the meantime, more agile and customer-oriented concerns will get right on the stick, providing 64-bit-optimized versions of your favorite software. So, save your Euros for that inevitable upgrade, because longer really is better!

OMas has recently taken many an audio geek across the Divide of Confusion to the blissful land of OS X Understanding. This column was brewed while under the influence of Madredeus’ Electronico and, in keeping with the electronica slant, Björk’s Greatest Hits.

VIRTUAL MEMORY

Virtual Memory (VM) is a standard method of using slow hard disk space to act as a substitute for fast solid-state memory, typically Random Access Memory, or RAM. Both Mac OS and Windows use virtual memory to optimize RAM usage. In Ye Olde Days, hard disks were far less expensive per megabyte than RAM, so VM was a viable option for cash-poor, time-rich folks who couldn’t afford a boatload of RAM. You’d have to be time-rich, because the time it takes to read and write data to rotating media like a hard disk is orders of magnitude slower than RAM access times.

When an operating system decides that memory requirements are getting tight, it takes the data it has used least recently and “pages” it out of RAM to disk until it’s needed again. If the data is later required to complete some operation, then it’s read from disk back into RAM and then used. This “swapping” of data to and from RAM and disk takes what appears, to a CPU operating at several GHz, to be an inordinately large amount of time. Hence, the slowdown associated with the use of VM. Moral of this story: The more RAM you have, the less swapping happens and the faster your computer will be.
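The moral can be demonstrated with a small paging simulation. The sketch assumes exact least-recently-used (LRU) replacement, which real operating systems typically only approximate, and a made-up string of page references; each “page fault” stands for one slow trip to disk.

```python
from collections import OrderedDict

def count_page_faults(references, frames):
    """Simulate demand paging with LRU replacement.
    `frames` (at least 1) is how many pages fit in RAM; each fault means a disk read."""
    ram = OrderedDict()   # page -> None, ordered from least to most recently used
    faults = 0
    for page in references:
        if page in ram:
            ram.move_to_end(page)       # hit: mark as most recently used
            continue
        faults += 1                     # miss: page must be read back from disk
        if len(ram) >= frames:
            ram.popitem(last=False)     # evict the least recently used page
        ram[page] = None
    return faults

refs = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]   # hypothetical access pattern
for frames in (3, 4, 5):
    print(frames, "frames of RAM:", count_page_faults(refs, frames), "page faults")
# 3 frames of RAM: 10 page faults
# 4 frames of RAM: 8 page faults
# 5 frames of RAM: 5 page faults
```

With five frames, every page fits and only the unavoidable first loads hit the disk.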

— OMas