When it comes to sheer power, today’s DAW-based studios blow away their analog ancestors. Features like unlimited track counts, pitch-and-time manipulation, plug-in effects, virtual instruments, amp modeling, automation, total recall and non-destructive editing provide musicians, engineers and producers with capabilities and options that weren’t even dreamed of in the analog era. But recording into a computer presents one very large drawback that was never a problem back then: latency.

On-Time Performance

Latency has a number of causes, which we’ll cover in this article, but when you boil it down, it’s an issue of timing. Whether you’re recording a DI guitar or bass, miking a voice or instrument, or playing a virtual synth, there is a delay between when a note is played or sung, and when it’s heard through the monitoring system.

The delay occurs because it takes a tiny fraction of a second for the signal to go through the analog-to-digital conversion in the interface, into the DAW’s buffers for processing, then back to the interface and through the digital-to-analog converter. Musicians and singers are used to hearing the notes in real time when they play their instruments or sing, so even a slight delay is disconcerting, and can make it impossible to keep time.

Latency of 12 to 15 milliseconds or higher will distract and cause difficulty for most musicians and singers. Even 6 to 11 ms can throw off the “feel” without necessarily being perceived as an actual delay. For a little context, when a drummer hits a snare drum, the time it takes for that sound to reach his or her ears is about 2.1 ms. The time it takes you to blink your eyes is typically between 300 and 400 ms.
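To put those millisecond figures in physical terms, the short sketch below converts a delay into the distance sound travels through air in that time. The speed of sound used here (343 m/s, roughly room temperature) is an assumption that does not come from the article, and the function names are purely illustrative.

```python
# Assumed value: speed of sound in dry air at about 20 degrees C.
SPEED_OF_SOUND_M_PER_S = 343.0


def latency_to_distance_m(latency_ms: float) -> float:
    """Distance (in meters) that sound travels through air in latency_ms."""
    return SPEED_OF_SOUND_M_PER_S * latency_ms / 1000.0


def distance_to_latency_ms(distance_m: float) -> float:
    """Time (in milliseconds) sound needs to cover distance_m of air."""
    return distance_m / SPEED_OF_SOUND_M_PER_S * 1000.0


# The snare example, plus the lower ends of the two ranges quoted above.
for ms in (2.1, 6.0, 12.0):
    print(f"{ms:5.1f} ms is like standing {latency_to_distance_m(ms):.2f} m from the source")
```

Under that assumption, 2.1 ms corresponds to roughly 0.7 m of air, which is about the distance from a snare head to the drummer’s ears, while 12 ms is like listening from around 4 m away.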

Interestingly, not everyone is affected to the same degree by latency. Some musicians can still function when the delay is relatively slight, whereas others will find it simply too distracting. It’s a safe assumption that vocalists are most thrown by latency, because they’re used to hearing their voices in their heads when they sing—an experience that’s even more immediate than listening to an instrument through the air.

Take a Trip

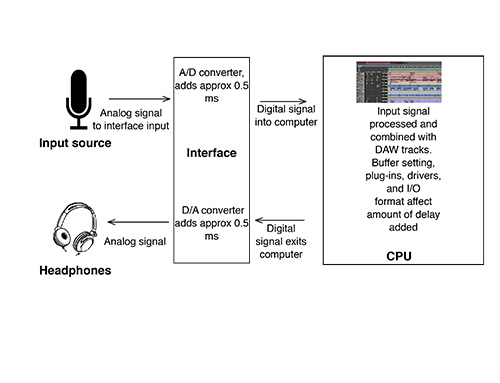

The term “round-trip latency” refers to the time it takes for audio to pass from the audio interface into the computer and back to the interface for monitoring. A number of components affect the amount of delay along the way.

First, the signal must pass through the analog-to-digital converter in the interface, which typically takes about half a millisecond. Once digitized, it travels into the computer and the DAW, gets processed with whatever plug-ins are being used (which add even more delay), and then comes out of the DAW and back to the interface (see Fig. 1). Next, it goes through the digital-to-analog converter, adding another 0.5 milliseconds or so.

The time it takes for audio to be processed once inside a DAW is determined by the size of the buffers. These are temporary RAM storage areas that exist on both the input and output side. As audio passes through, it’s held briefly in the buffers, allowing the computer to process it more smoothly. The downside is that it adds additional delay. You have some control over this via the “I/O buffer size,” a setting that’s usually found in your DAW’s audio preferences.
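As a rough illustration of how these stages add up, here is a minimal sketch that sums the converter delays mentioned above with one input buffer and one output buffer. The 44.1 kHz sample rate, the function names and the optional `plugin_ms` parameter are assumptions for illustration; real systems also add driver and transport overhead that this simple model ignores.

```python
def buffer_delay_ms(buffer_samples: int, sample_rate_hz: int) -> float:
    """Time it takes to fill (or play out) one buffer, in milliseconds."""
    return buffer_samples / sample_rate_hz * 1000.0


def estimate_round_trip_ms(
    buffer_samples: int,
    sample_rate_hz: int = 44_100,  # assumed session sample rate
    adc_ms: float = 0.5,           # approximate converter delay from the text
    dac_ms: float = 0.5,           # approximate converter delay from the text
    plugin_ms: float = 0.0,        # hypothetical extra delay added by plug-ins
) -> float:
    """Simplified estimate: ADC + input buffer + plug-ins + output buffer + DAC."""
    one_buffer = buffer_delay_ms(buffer_samples, sample_rate_hz)
    return adc_ms + one_buffer + plugin_ms + one_buffer + dac_ms


for size in (32, 128, 512):
    print(f"{size:4d} samples: about {estimate_round_trip_ms(size):.2f} ms round trip")
```

In this model the buffer term scales with the buffer size and inversely with the sample rate, which is why the buffer setting is usually the first thing to adjust when chasing lower latency.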

Buffer sizes typically go incrementally from 32 to 2048 samples, or even higher (each successive setting is double the previous: 32, 64, 128, etc.). Larger buffers create more latency and smaller settings create less. So why not always use the smallest buffer possible? The reason is that smaller settings give the CPU less time to handle the necessary processing, forcing it to work harder to keep up. The result can be audio with distortion or pops and clicks. It’s often necessary to set a buffer size that’s higher than the lowest setting, in order to get clean audio. Older, slower computers are more susceptible to glitches at low buffer settings.
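The trade-off can be sketched with a deliberately simplified model: each buffer must be fully processed before the next one arrives, so the buffer’s duration acts as a deadline. The cost model below (a fixed per-buffer overhead plus a per-sample cost), the 80% headroom factor and all of the numbers are hypothetical, chosen only to show the shape of the problem rather than to describe any particular computer or DAW.

```python
# Power-of-two buffer sizes, each double the previous one.
BUFFER_SIZES = [32, 64, 128, 256, 512, 1024, 2048]


def smallest_clean_buffer(
    fixed_overhead_ms: float,      # hypothetical per-buffer cost (scheduling, driver calls)
    per_sample_cost_us: float,     # hypothetical processing cost per sample, in microseconds
    sample_rate_hz: int = 44_100,  # assumed session sample rate
    headroom: float = 0.8,         # assumed: use at most 80% of the available time
):
    """Return the smallest buffer size whose processing fits its deadline, or None."""
    for size in BUFFER_SIZES:
        deadline_ms = size / sample_rate_hz * 1000.0
        work_ms = fixed_overhead_ms + per_sample_cost_us * size / 1000.0
        if work_ms <= deadline_ms * headroom:
            return size
    return None  # the session is too heavy for any listed setting


print(smallest_clean_buffer(fixed_overhead_ms=0.1, per_sample_cost_us=2.0))
print(smallest_clean_buffer(fixed_overhead_ms=1.0, per_sample_cost_us=5.0))
```

In this model a larger buffer helps because the fixed overhead is spread over more samples. If the per-sample cost alone exceeds the time available per sample, no buffer setting will give clean audio.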

In addition, the more tracks and plug-ins there are on a session, the greater the strain on the CPU, and the lower the likelihood of getting away with low buffer settings.

Another factor that can influence the amount of latency is the efficiency of the I/O technology being used, such as USB2 or USB3, FireWire 400 or 800, or Thunderbolt. Thunderbolt, which is used on Focusrite’s Clarett series of interfaces (see Fig. 2), is the fastest, followed by USB3, FireWire 800, FireWire 400 and USB2.

Fig. 2: Focusrite’s Clarett series of interfaces features Thunderbolt connectivity, which allows for the fastest connection and lowest latency.

Many interfaces require a software driver to be installed in order for them to work. The quality of the driver can also impact the amount of latency, which is another reason the choice of an interface is consequential.

Half Measures

Audio interface manufacturers have attempted to combat latency with a variety of strategies. Direct hardware monitoring is one of the most common. It splits off a copy of the input signal and routes it directly to the headphone or speaker output. That way, the input source is heard with virtually no latency. The problem is that it’s being heard completely dry, without any of the processing that would otherwise have been in the cue mix.

Some interface manufacturers have addressed that problem by including a DSP chip, allowing the user to monitor, or even print, with effects like reverb, delay and compression. These interfaces generally come with software mixers, which are separate applications that allow the user to control routing, cue mixes and cue-mix effects.

If you record through a mixing console, you can set up your own direct monitoring, regardless of your interface’s capabilities, by including the direct sound from the input channel in your cue mix.

There’s one major limitation to direct monitoring, however: It will be little or no help when recording parts that depend on sounds created in the box. In that case, the talent will still have to monitor from after the signal makes its round trip through the computer and back into the interface.

For instance, when recording a DI guitar part through an amp simulator, direct hardware monitoring won’t allow the guitarist to hear the simulated amp tone while playing, only the clean direct sound. Yes, the amp sim can be added later during the mix, but for many types of guitar parts, especially distorted ones, the touch and feel is heavily dependent on the sound and the amount of sustain. In such a case, playing it clean isn’t an option, and the guitarist will have no choice but to deal with whatever the system’s latency is while recording the part.

It can also be problematic when recording with a MIDI virtual instrument. That instrument’s entire sound is created inside a DAW plug-in, so the player doesn’t have the option of hearing a clean DI sound and adding the sound later, as is the case with amp simulators.

Another way in which interface manufacturers have tried to circumvent the problems caused by latency is by using a “mix” knob, which adjusts the ratio of direct-monitored input signal and round-trip, processed signal in the monitor mix. The theory is that a happy medium can be found, with enough processed signal to satisfy sonic needs and enough direct signal to keep time.
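Conceptually, such a knob behaves like a crossfade between two copies of the same performance, one immediate and one late. The sketch below assumes a simple linear blend and represents signals as plain lists of samples; actual interfaces may use a different blend law, and the helper names are illustrative only.

```python
def delayed(signal, delay_samples):
    """Return the signal shifted later by delay_samples, padded with silence."""
    return ([0.0] * delay_samples + list(signal))[: len(signal)]


def monitor_mix(direct, processed, mix):
    """Linear blend: mix=0.0 is all direct signal, mix=1.0 is all round-trip signal."""
    if not 0.0 <= mix <= 1.0:
        raise ValueError("mix must be between 0.0 and 1.0")
    if len(direct) != len(processed):
        raise ValueError("signals must have the same length")
    return [(1.0 - mix) * d + mix * p for d, p in zip(direct, processed)]


# A single click, and the same click arriving 3 samples late via the round trip.
click = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0]
round_trip = delayed(click, 3)

print(monitor_mix(click, round_trip, 0.5))  # [0.5, 0.0, 0.0, 0.5, 0.0, 0.0]
```

With the knob in the middle, the performer hears the same click twice, once on time and once late, at reduced level.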

In practice, this solution is often unsatisfying. The input signal either sounds like it has a delay on it, or, if the mix knob is set too far to the “direct” side, it’s not much different from the dry, effect-less sound of basic direct monitoring. What’s more, it doesn’t help at all when using a virtual instrument, since there’s no direct sound to blend in.

The Way Forward

None of the solutions described in the previous section are completely satisfying for the user, and none provide the seamless, “what you play is what you get,” latency-free recording experience.

The advent of Thunderbolt I/O technology, which can achieve much faster data-transfer rates and wider bandwidth than previous formats, provides the framework for a solution that essentially eliminates noticeable latency. Unlike FireWire or USB, Thunderbolt allows for connection of a compatible audio device to a computer’s PCI Express interface, which is essentially a super-fast internal bus.

Focusrite has harnessed this technology in its Clarett series of Thunderbolt audio interfaces. Models include the 2Pre (10-in, 4-out), the 4Pre (18-in, 8-out), the 8Pre (18-in, 20-out) and the 8PreX (26-in, 28-out), which offer round-trip latency so low that it eliminates the need for direct monitoring, mix controls or any kind of workaround: it’s just plug and play. Because of the capacity and speed of Thunderbolt and the efficiency of Focusrite’s drivers, latency is virtually unnoticeable, even when effects from DAW plug-ins are added to the cue mix (see Fig. 3).

Fig. 3: This latency chart shows how low latency can be on various DAWs, when using Focusrite Clarett interfaces. Notice that all these figures are below the level at which latency becomes noticeable.

The performance varies a bit from DAW to DAW, but in both Logic Pro X and Cubase Pro 8, it’s possible to get a stunningly low round-trip latency of 1.67 ms on a Mac Pro, with the buffer set at 32 samples. Even with the buffer at 128, that number only goes up to 2.34 ms. The highest number from this test, 7.93 ms on Pro Tools 11 with a buffer of 128, is still well below the level at which latency generally becomes problematic.

Whether you’re a self-recording musician, a producer or an engineer, a Clarett-equipped studio will free you from the problems caused by latency, and allow you to focus on creating great music, which is the way it should be.