When I first played games such as Pac-Man and Asteroids in the early ’80s, I was fascinated. While others saw a cute, beeping box, I saw something to be torn open and explored. How could these games create sounds I’d never heard before? Back then, it was transistors, followed by simple, solid-state sound generators programmed with individual memory registers, machine code and dumb terminals. Now, things are more complex. We’re no longer at the mercy of 8-bit hardware, or limited to handing a sound to a programmer and saying, “Put it in.” Today, game audio engineers have just as much power to create an exciting soundscape as anyone at Skywalker Ranch. (Well, okay, maybe not Randy Thom, but close, right?)

But just as a single channel strip on a Neve or SSL once baffled me, sound-bank manipulation can baffle your average recording engineer. I’d like to help demystify this technology, starting with what middleware game audio engines are, because they are the key to understanding what makes game audio different.

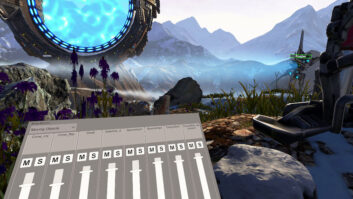

In games, once sound is captured, there’s a whole other level to explore: integration. That’s where middleware comes in. Middleware is software that connects game developers with the hardware (Xbox 360, PS3, PC, etc.) they use in development. Just as Pro Tools lets you generate sound from a computer, middleware lets users link sounds to game objects, such as animations (firing a gun, running), scripted events (a column falling across a road, a ship going to lightspeed) or areas (inside a church, at the foot of a cliff). A programmer was once required for all of this integration; that is no longer the case.
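To make the idea of linking sounds to game objects concrete, here is a minimal sketch in Python. It is not the API of any engine discussed in this article; the class name, the event names and the cue names are all hypothetical. It only shows the kind of lookup table a middleware tool maintains so that an audio engineer, rather than a programmer, decides which sound an animation, scripted event or area triggers.

```python
from dataclasses import dataclass, field


@dataclass
class SoundBindings:
    """Hypothetical table mapping game event names to sound cue names."""
    bindings: dict = field(default_factory=dict)

    def bind(self, event: str, cue: str) -> None:
        # One event may trigger several cues (e.g. a shot plus a shell casing).
        self.bindings.setdefault(event, []).append(cue)

    def cues_for(self, event: str) -> list:
        # Unknown events simply trigger nothing.
        return list(self.bindings.get(event, []))


table = SoundBindings()
table.bind("anim:fire_gun", "gunshot_01")        # animation
table.bind("anim:fire_gun", "shell_casing_01")
table.bind("script:column_falls", "stone_crash") # scripted event
table.bind("area:church_interior", "reverb_hall_ambience")  # area
```

At run time, the game would report an event name and the audio layer would play whatever `cues_for` returns, so changing a sound means editing the table rather than the game code.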

GAMECODA

From Creative Labs’ Sensaura division comes GameCODA (www.gamecoda.com) for the Xbox, PS2, PC and Gamecube platforms. It supports audio in WAV, AIFF, VAG, ADPCM, Xbox ADPCM and Ogg Vorbis formats, from mono through 5.1 surround, and the code is low-level, with a run-time component and APIs. Sounds are automatically compressed to console format.

Within GameCODA is CAGE Producer, a sophisticated bank-management tool that’s cross-platform compatible; its tab-type switching between consoles is an excellent feature. While the engine is fully compatible for hookup with Renderware, Alchemy, Gamebryo, Karma, Fonix and Havok middleware, it doesn’t have direct integration with Unreal 3. CAGE plug-ins provide control of 3-D sounds directly in 3DS Max and Maya. However, most game audio engineers aren’t familiar with those environments, so placing sounds with these tools isn’t especially practical.

If your programmers know how to use Renderware, then you can hear real-time parameter changes using GameCODA. The same is true of Renderware’s native audio tools. One caveat: Criterion is now owned by Electronic Arts. The Renderware site was last updated in 2005, and many developers are scrambling to Unreal 3 due to uncertainty about Renderware’s future. Pity, it’s a pretty good engine.

Streaming is supported, though the company has not revealed how it is supported on next-gen consoles. What is nice is that you can specify whether you want a sound streamed within CAGE Producer. GameCODA also lets you create ducking/mixing groups within CAGE. Programmers can also take advantage of this in code using virtual voice channels.

Other than SoundMAX (an older audio engine by Analog Devices and Staccato), GameCODA is the first audio engine I’ve seen that uses matrix technology to achieve impressive car engine effects. Imagine being able to crossfade samples across a grid to achieve multiloop, seamless transitioning during shifting and RPM change. It’s that cool.
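For readers who want to see what crossfading engine loops looks like in practice, here is a rough sketch of the general technique. This is not GameCODA’s implementation, which isn’t documented here; the function name and the RPM values are assumptions chosen for illustration. It covers one row of the grid (RPM only) and uses an equal-power crossfade between the two loops nearest the current RPM. A second axis, such as engine load, could be blended the same way.

```python
import math
from bisect import bisect_right


def crossfade_gains(rpm, loop_rpms):
    """Return one gain per engine loop for the current RPM.

    loop_rpms lists the RPM at which each loop was recorded, sorted
    ascending (hypothetical values). Only the two loops that bracket
    the current RPM are audible, blended with an equal-power curve.
    """
    n = len(loop_rpms)
    gains = [0.0] * n
    if rpm <= loop_rpms[0]:
        gains[0] = 1.0
        return gains
    if rpm >= loop_rpms[-1]:
        gains[-1] = 1.0
        return gains
    hi = bisect_right(loop_rpms, rpm)   # first loop recorded above rpm
    lo = hi - 1                         # loop recorded at or below rpm
    t = (rpm - loop_rpms[lo]) / (loop_rpms[hi] - loop_rpms[lo])
    gains[lo] = math.cos(t * math.pi / 2)   # fades out as t goes 0 -> 1
    gains[hi] = math.sin(t * math.pi / 2)   # fades in as t goes 0 -> 1
    return gains


# Three loops recorded at 1,000, 3,000 and 5,000 RPM.
halfway = crossfade_gains(2000, [1000, 3000, 5000])
```

The equal-power curve keeps the squares of the two gains summing to one, which is a common way to avoid a dip in perceived loudness at the midpoint of the blend.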

Alas, there’s no way to link directly to game events without a programmer’s help. Unlike DirectMusic, there’s no Visual Basic scripting equivalent, and unlike RenderwareAudio, there’s no message system. However, it is possible to link messages in Renderware to samples in GameCODA directly if you’re using that approach. Still, it won’t just “work” out of the box, which is what we all have been waiting for. Interactive music support is coming soon. According to company announcements, GameCODA will link seamlessly with Creative’s ISACT (Interactive Spatialized Audio Composition Technology).

On the upside, GameCODA is one of the first really hard-hitting audio middleware products of its kind, and although most thought it was dead, it is still possible to license it. It features extensive integration functionality using 3DS Max and Maya, matrices, timeline editing and multiplatform seamless production. Support is provided within a 24-hour response time. GameCODA is also less expensive than some other engines. However, how is next-gen supported? That isn’t yet revealed, although it is hinted at in FAQs and press releases. In addition, a number of developments on the horizon could make this engine even better, such as direct ISACT support and other plug-ins for speech recognition. Linking closely with Renderware and not Unreal 3 is also a problem; Unreal 3 is the Number One middleware these days.

ISACT

Also from Creative Labs, ISACT (http://developer.creative.com) supports the PC, Xbox and Xbox 360 platforms, and is free if a PC hardware output layer is used. The program supports WAV, AIFF, CDDA (import), PCM, ADPCM, WMA, XMA and Ogg Vorbis (export) sound formats, as well as any configuration of surround audio. Sounds are automatically compressed to console format using the Target Platform Settings tool.

ISACT Production Studio (IPS) is essentially a multitrack editing environment. However, the “tracks” are far more varied than audio or MIDI. IPS gives you control of a completely new suite of objects specifically oriented to gameplay situations such as Sound Randomizers, Sound Events and Sound Entities. Don’t get scared; it’s a whole new ballgame, a whole new playground.

Using the IPS function Realtime Parameter Controls and the run-time component with a network connection to your target platform, you will have real-time control during game play of all ISACT functions.

Although it’s somewhat convoluted, it is possible to use Sound Entities and Groups to create a ducking behavior. I’ve found the best way to do this is to assign each sound or sound object in your hierarchy to a group that you define. Then, in a matrix (think Excel document), set priorities (which group ducks a set of other groups or an individual sound), volume and duck time. ISACT makes it necessary to create variables within objects and, well, without going into too much detail, it isn’t as simple as my method.
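The matrix approach to ducking can be sketched in a few lines of Python. This is one possible reading of the spreadsheet idea, not ISACT’s own mechanism; the group names, gain values and fade times are made up for illustration. Each row is a group that does the ducking, each column is a group that gets ducked, and each cell holds the target volume and the duck time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DuckRule:
    gain: float          # linear volume applied to the ducked group (0.0 to 1.0)
    fade_seconds: float  # how long the duck takes to reach that volume


# Hypothetical matrix: rows duck columns.
DUCK_MATRIX = {
    "dialogue": {"music": DuckRule(0.4, 0.25), "ambience": DuckRule(0.6, 0.5)},
    "explosions": {"music": DuckRule(0.7, 0.1)},
}


def target_gains(active_groups, all_groups, matrix=DUCK_MATRIX):
    """Return the volume each group should move toward right now.

    If several active groups duck the same target, the lowest gain wins.
    """
    gains = {group: 1.0 for group in all_groups}
    for ducker in active_groups:
        for ducked, rule in matrix.get(ducker, {}).items():
            if ducked in gains:
                gains[ducked] = min(gains[ducked], rule.gain)
    return gains


groups = ["dialogue", "music", "ambience", "explosions"]
mix = target_gains({"dialogue", "explosions"}, groups)
```

In a real mixer, each group’s volume would ramp toward its target over `fade_seconds` rather than jumping; that ramp is left out here to keep the sketch short.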

Although there aren’t any sound matrices lying around for you to use, once again, the Sound Entity is your friend! Create parameters such as RPM, shift and gear, and assign them to pitch and crossfades using RPC (Realtime Parameter Control). Again, it’s not quite as fast or intuitive as other methods (such as GameCODA’s), but a Sound Entity is a much more open-ended tool.
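As a rough illustration of driving pitch from a game parameter, the sketch below maps an RPM value to a pitch ratio through a piecewise-linear curve. It does not use ISACT’s RPC interface; the function name and the curve points are assumptions for the sake of the example.

```python
def evaluate_curve(points, x):
    """Piecewise-linear lookup.

    points is a list of (input, output) pairs sorted by input.
    Inputs outside the curve are clamped to the first or last output.
    """
    if x <= points[0][0]:
        return points[0][1]
    if x >= points[-1][0]:
        return points[-1][1]
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= x <= x1:
            return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


# Hypothetical curve: RPM on the input side, pitch ratio on the output side.
RPM_TO_PITCH = [(1000, 0.5), (4000, 1.0), (7000, 2.0)]

pitch_at_2500 = evaluate_curve(RPM_TO_PITCH, 2500)
```

The same lookup could feed a crossfade amount or a volume instead of pitch; only the output side of the curve changes.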

As with GameCODA, you can specify whether you want a sound streamed. The plus here is that you can specify preloaded sounds (this means the first chunk of a streamed sound is loaded to avoid the disk-loading latency associated with standard streaming sounds). It’s extremely useful for quick, load-required streamed sounds such as voice-over.
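The preloading idea is simple enough to sketch. The following Python class is an illustration of the concept only, not ISACT code; the class name and the chunk size are hypothetical. The first chunk of the sound is read into memory when the stream is created, so playback can begin from memory while the rest is fetched from disk as needed.

```python
import io


class PreloadedStream:
    """Hypothetical streamed sound whose first chunk is held in memory."""

    def __init__(self, source, preload_bytes):
        self._source = source
        # Read the head up front, at load time, so the first playback
        # request never has to wait on the disk.
        self._head = source.read(preload_bytes)
        self._head_pos = 0

    def read(self, n):
        # Serve from the preloaded head first.
        out = self._head[self._head_pos:self._head_pos + n]
        self._head_pos += len(out)
        # Once the head is used up, fall through to the underlying source.
        if len(out) < n:
            out += self._source.read(n - len(out))
        return out


# A BytesIO object stands in for a sound file on disk.
stream = PreloadedStream(io.BytesIO(b"0123456789"), preload_bytes=4)
first = stream.read(3)
second = stream.read(3)
rest = stream.read(10)
```

The trade-off is memory: every preloaded sound keeps its head resident, so the chunk size is usually kept just large enough to cover the disk seek.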

ISACT can load a CAGE Producer file for interactive music. ISACT was originally designed to be an interactive music system, and as such, you can create tracks containing music objects with individual volume and spatialization (as with most multitrackers). ISACT also allows you to randomize these, transition them with controllable crossfades linked to events and much more.

Here again, the Sound Entity lets users create parameters that will link to game events. Unfortunately, as with GameCODA, you can’t just look up events in a list in your game world editor and then type them into IPS and have them work.

On a happy note, ISACT won a Frontline Award two years ago for a reason: It was the first tool that used a track layout to associate it more closely with traditional DAWs for game audio integration. At this point, it has a huge number of great, open-ended features that put it in a category all its own. The fact that it is free and allows you to create your own kind of sound behaviors is more than worth the learning curve. And ISACT’s near-instant e-mail support response is excellent. However, it’s a bit challenging to get your head around the concepts of Sound Entities, and at this time, there are no plans to support Wii or PS3. At a company like mine, that creates quite a few limitations.

MORE TO COME

So far, I’ve looked at the first two of the bunch. In future segments, I’ll delve into Wwise, FMOD, Unreal 3 and Miles.

Alexander Brandon is the audio director for Midway Home Entertainment in San Diego, Calif.