Thirty-five years after its adoption, MIDI is on the move again. We already covered the significance of MPE (MIDI Polyphonic Expression) in May 2016, and now the MMA (MIDI Manufacturers Association) has ratified the MIDI-CI (MIDI Capability Inquiry) specification, the latest part of what appears to be the opening salvo in a new series of MIDI enhancements.

The main problem that MIDI-CI solves is the inability of pre-MIDI-CI gear to recognize the functionality of other gear. For example, if you hooked up a hardware control surface with 16 faders to a synth, the control surface wouldn’t know what it was controlling, and the synth wouldn’t know it was being controlled. There were two workarounds. The first was hoping that the gear included templates written to accommodate other equipment. The Mackie Control protocol is a good example of this. If a controller is Mackie Control-compatible, and the device being controlled understands the Mackie Control spec, then the two can talk to each other somewhat, but only after you’ve made introductions, for example, by loading a template or changing a preference. The other option was to map each control to a parameter by hand, which could be a laborious process. I use a Peavey PC-1600 hardware fader box to control software mixer faders in live performance, and neither is aware of the other’s existence. This requires programming the hardware faders to control the software faders.

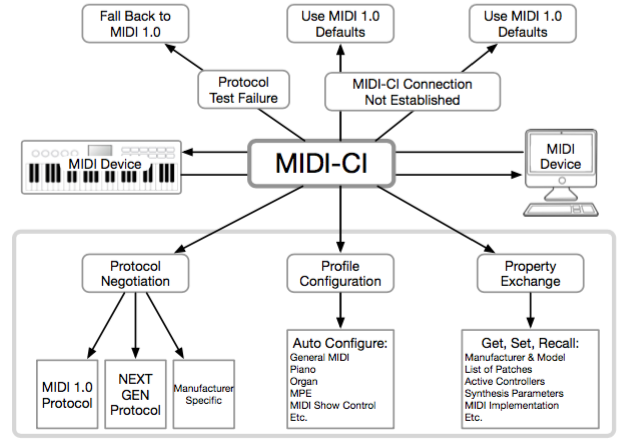

To solve this problem, a device that meets the MIDI-CI spec will be able to recognize the functionality of other MIDI equipment and configure itself accordingly, simply by being hooked up. But the concept goes deeper. Capability inquiries also make it easier to adopt next-generation protocols because the spec includes a fallback option for older gear, so nothing is left out in the cold as the technology advances. Not to embarrass other industries, but once again the MMA and AMEI (the Japan-based Association of Musical Electronics Industries that maintains the MIDI spec for Japan) have shown it’s possible to work together to benefit their customers, not just themselves.

There are three main elements to MIDI-CI: Profile Configuration, Property Exchange and Protocol Negotiation. These will develop over time as new gear is introduced, but older devices may also be retrofittable. Profiles define the rules for how MIDI equipment communicates. For example, the architecture for analog synths (virtual or hardware) is fairly mature. A hardware controller could recognize that it’s controlling an analog synth and automatically map its controls to the synth. Furthermore, if you used a different virtual analog synth, the control mapping would be the same; you wouldn’t need to remap controls or learn a new control configuration. It’s much like MIDI Learn, but automatic and more comprehensive.
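To make the profile idea concrete, here is a minimal sketch of what automatic mapping could look like from the controller’s side. The profile table, parameter names and the `auto_map` function are hypothetical; they illustrate the concept and are not taken from any published MIDI-CI profile.

```python
# Hypothetical "analog synth" profile: parameter names and controller numbers
# are illustrative assumptions, not values from a ratified profile.
ANALOG_SYNTH_PROFILE = {
    "filter_cutoff": 74,
    "filter_resonance": 71,
    "amp_attack": 73,
    "amp_release": 72,
}


def auto_map(faders, profile):
    """Pair each physical fader with the next parameter the profile defines."""
    return {
        fader: (name, cc)
        for fader, (name, cc) in zip(faders, profile.items())
    }


faders = ["fader_1", "fader_2", "fader_3", "fader_4"]

# Any synth that declares the same profile gets the same mapping,
# so switching synths requires no remapping.
mapping = auto_map(faders, ANALOG_SYNTH_PROFILE)
for fader, (name, cc) in mapping.items():
    print(f"{fader} -> {name} (CC {cc})")
```

The point of the sketch is that the mapping depends only on the profile, not on which synth is attached.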

One of the main values of Property Exchange (PE) messages is bridging the hardware and software worlds. A DAW’s project file could store device setups for external hardware so that controller names, patch names, metadata and other properties would be at your fingertips within the DAW. Essentially, this makes hardware appear like a plug-in within a DAW; merely connecting a hardware synthesizer would be conceptually the same as inserting a software plug-in. This was demonstrated at the MMA’s annual NAMM meeting, where Cubase did a “total recall” of settings from Yamaha, Roland and Korg hardware synthesizers. Although we have always had SysEx (System Exclusive) messages to save and load data, being able to access parameters seamlessly from within a DAW is welcome.
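As a rough sketch of the “total recall” idea, a DAW could save whatever properties a device reports alongside the project and read them back later. The property names, the example device and the use of JSON as the storage format are all assumptions made for illustration.

```python
import json


def snapshot_device(device_properties):
    """Serialize the properties a device reported so a project file can store them."""
    return json.dumps(device_properties, sort_keys=True)


def recall_device(stored):
    """Rebuild the property set from what was saved with the project."""
    return json.loads(stored)


# Hypothetical properties reported by a connected hardware synth.
reported = {
    "device": "Example Synth",
    "patch_name": "Warm Pad",
    "controllers": {"74": "Cutoff", "71": "Resonance"},
}

stored = snapshot_device(reported)   # written into the project file
restored = recall_device(stored)     # read back when the project reopens
print(restored["patch_name"])
```

Once the DAW holds this data, it can show patch and controller names for the hardware much as it would for a plug-in.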

Protocol Negotiation is the most forward-looking aspect of MIDI-CI. Both the MMA and AMEI are working on a next-generation protocol that has the potential for higher resolution, additional expressiveness, a greater number of channels and more: essentially, the wish list that many have expressed for a “MIDI 2.0.” MIDI gear can determine whether other MIDI gear can take advantage of the new features; devices that lack these capabilities will simply continue to use MIDI 1.0. For example, a new controller with dramatically increased expressiveness would be able to use sound generators that take advantage of the increased expressive capabilities, but continue to work at the existing MIDI 1.0 level with older synthesizers.
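The fallback logic is simple enough to sketch in a few lines. This is only a conceptual model: the protocol labels and the `negotiate` function are hypothetical, and the real exchange happens through MIDI-CI messages rather than Python lists.

```python
MIDI_1_0 = "MIDI 1.0"


def negotiate(initiator_protocols, responder_protocols):
    """Return the first protocol in the initiator's preference order that the
    responder also supports, falling back to MIDI 1.0 when none match."""
    for protocol in initiator_protocols:
        if protocol in responder_protocols:
            return protocol
    return MIDI_1_0


# Hypothetical capability lists, in order of preference.
new_controller = ["next-gen", MIDI_1_0]
new_synth = ["next-gen", MIDI_1_0]
old_synth = []  # older gear never answers the inquiry, so it reports nothing

print(negotiate(new_controller, new_synth))  # both sides support the new protocol
print(negotiate(new_controller, old_synth))  # no match, so MIDI 1.0 is used
```

The design choice worth noting is that MIDI 1.0 is the default rather than an error case, which is why existing gear keeps working.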

MIDI-CI opens the door for transitioning from MIDI as we’ve known it to a 21st-century protocol. But perhaps the most important aspect is that everything that works today is intended to work in the future, and changes can roll out over time to ensure a smooth transition. If only the world of computers worked this way. I can dream, can’t I? (Note: For updates on MIDI-CI and other MIDI enhancements, go to www.midi.org.)

Author/musician Craig Anderton updates craiganderton.com every Friday with news and tips. His latest album, Simplicity, is now available on Spotify and CD Baby.