For a long time there were rumors about Apple wanting to eventually merge iOS and Mac. There have been some developments in that direction, including an Apple announcement that Macs will soon allow iOS developers to port apps to the Mac platform. However, that seems to be a decision that’s aimed mainly at expanding the economic potential of the Mac platform, and isn’t a precursor to the melding of Mac and iOS. Indeed, Apple also recently announced that it has no plans to merge the two platforms. And to that, I say something I rarely say anymore—but used to all the time—“Thanks, Apple!”

Back when the iPhone first came out and music apps started appearing, I was actually quite enthused about the idea of music produced with a mobile device. I downloaded all manner of music apps and followed the various developments such as the introductions of Audiobus and Inter-App Audio. I wrote articles about mobile music production and enjoyed messing around with synth apps like Jordan Rudess’s MorphWhiz and Korg’s various remakes of its classic synths.


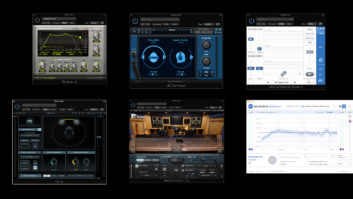

I experimented with recording on my iPad or iPhone into GarageBand and was thrilled when the Cubasis app, an actual professional-level DAW, was released on iOS. I also loved the way you could use apps from different developers in tandem, and I generally thought the whole iOS music thing was going to be just ducky.

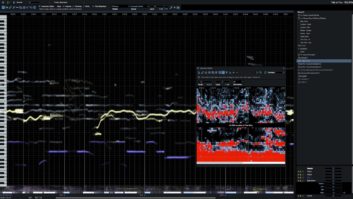

But after a while, the novelty wore off, and I realized that as mobile studio setups go, my MacBook Pro was leagues beyond my iPad in terms of power, capabilities and storage. I also concluded, after messing around with iOS multitracks, that the whole touchscreen paradigm wasn’t so swift for controlling a DAW, especially on the small screens of mobile devices. The numerous knobs, sliders, buttons and faders of a DAW GUI are difficult enough to navigate with a laptop’s touchpad or a mouse aiming a cursor, but a lot trickier when you’re trying to point at tiny areas of the screen with that big clunky controller known as your finger.

I do like touchscreen technology, and I think it can be beneficial as long as the screen is really big (for example, Slate Digital’s Raven console). Nevertheless, I don’t view the mouse-and-keyboard method as being old-school. I think it’s better in some ways (it’s much more precise), worse in others (it’s one step removed), but for navigating a crowded screen like a DAW mixer, I’ll go with the precision of a mouse any day.

The area of live sound is where iOS music software has become the most useful for actual professional work. Live mixer-control apps, which allow sound engineers to walk around a venue, particularly at soundcheck, making adjustments while untethered from the FOH position, provide a real benefit. That’s a circumstance where the portability of an iPad, and the ability to hold it with one hand while controlling it with the other (which is difficult at best on a laptop), is more consequential than the problems of manipulating a crowded touchscreen.

If you’re a live performer, an iPad is a revolutionary device that lets you carry around a virtually unlimited collection of written music and lyrics, and these days you can even use a Bluetooth footpedal to remotely change pages. For rehearsals, I love having a tuner, metronome and an audio recorder handy at all times on my phone.

But when it comes to music production, I’m sticking with my laptop. Would it be cool to be able to do remote multitrack sessions on my mobile device as easily as I can from my MacBook Pro? Sure. But until the former proves itself equal to or better than the latter, I’m not switching. I’m glad that Apple has, at least for now, put the brakes on merging iOS and Mac OS.