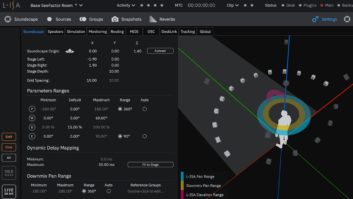

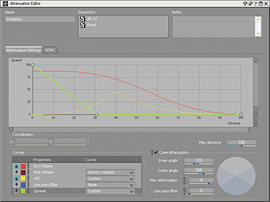

Wwise 2007.1 now features independent attenuation of several volume properties as part of the redesigned 3-D positioning system.

Audiokinetic first introduced the Wwise 2006.1 audio authoring tool for videogames at the Game Developers Conference in March 2006. (See “Technology Spotlight” in October 2006 Mix.) It was an off-the-shelf solution designed to help game developers implement interactive audio in an environment that would feel comfortable to an audio pro.

Since then, Wwise has earned Game Developer magazine’s prestigious Frontline Award and continues to attract the game industry’s attention: Audiokinetic signed a long-term agreement with Microsoft Game Studios to use Wwise on newly released games such as Shadowrun, developed by FASA Studio. I test-drove the recent 2007.1 update, which introduces an interactive music toolset along with a redesigned 3-D audio spatialization system.

INTERACTIVE MUSIC PRIMER

Interactive music can mean many things to different people. In videogames, getting music to shift dynamically with constant changes in game state (as dictated by the player’s actions) is a formidable challenge; imagine the dizzying array of possible transitions between cues. How far a game’s music transitions from one cue to the next depends on the game and its style of play. There have been several games with very good interactive music implementations, but most used proprietary tools to achieve them. Middleware providers such as Audiokinetic are now beginning to offer commercial tools that make this kind of implementation practical.

THE TEST DRIVE

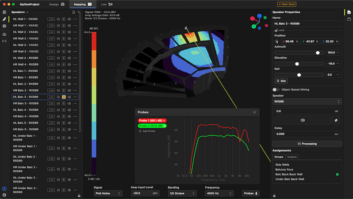

The Interactive Music Layout in Wwise 2007.1 features the powerful Music Segment Editor.

Game developers can demo Wwise 2007.1 (the application plus the SDK, which allows integrating Wwise into game engines) by submitting a form on Audiokinetic’s Website. Wwise is available only as a licensed solution; the license fee must be negotiated with Audiokinetic. Wwise includes Cube, a first-person shooter (with Doom 2-era graphics) whose engine comes with the Wwise SDK pre-integrated; that is what I used to test Wwise. Once you download Wwise, pay close attention to the install instructions: a lot of components work together to make the magic happen, and with all of the support files the installation takes up just under 300 MB. I found the documentation extremely well-written; it includes a comprehensive example project and a highly polished set of tutorials that got me up and running quickly and smoothly. For content providers, students and educational institutions, Audiokinetic offers a limited licensing agreement with online support and access to help forums.

The big news with V. 2007.1 is that Wwise now employs a sample-accurate interactive music engine, enabling composers to tie music objects to game events and triggers so that musical transitions stay smooth as game states change. With the new Interactive Music Hierarchy, I was able to easily manage music objects by placing them in the hierarchy and defining their properties and behaviors individually or as a group. The new objects are Music Segments, which correspond to the actual WAV files of music, plus two container types: Music Switch containers, which switch between music segments, and Music Playlist containers, which play music segments in a particular order. The user interface is the same as in previous versions, although I must say that I am not a fan of Audiokinetic’s custom interface, which limits window resizing and multiple-monitor support.

FEELIN’ THE RHYTHM

To establish sync and transition points for my music files, I had to tell each music segment its tempo and meter, matching how I authored each file outside of Wwise. Along with tempo and time signature, I used the grid settings to specify how each music segment is virtually partitioned. This extra level of granularity gave me a great deal of flexibility in determining sync points for music transitions, state changes and stingers. Music Switch containers allowed me to group pieces of music according to the different alternatives that exist for particular elements within a game. For example, the container might have switches for fight sequences, stressful situations and character stealth mode.
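For readers who want the arithmetic behind grid-based sync points, here is a minimal Python sketch. It assumes a constant tempo and meter and a grid expressed as a fraction of a bar; the function name and parameters are hypothetical illustrations, not part of the Wwise SDK.

```python
import math


def next_sync_point(position_s: float, bpm: float, beats_per_bar: int,
                    grid_bars: float = 1.0) -> float:
    """Return the time (in seconds) of the next grid line at or after position_s.

    Hypothetical helper: assumes a constant tempo and meter, with the grid
    given as a fraction of one bar (1.0 = every bar, 0.25 = every beat in 4/4).
    """
    beat_s = 60.0 / bpm                      # length of one beat
    grid_s = beat_s * beats_per_bar * grid_bars  # length of one grid cell
    position_s = max(0.0, position_s)
    # Round up to the next whole grid cell; a position already on a grid line stays put.
    return math.ceil(position_s / grid_s) * grid_s
```

At 120 BPM in 4/4, one bar lasts 2 seconds, so a transition requested 3.1 seconds into a segment would wait for the bar line at 4.0 seconds; with a one-beat grid it could happen at 3.5 seconds instead. A finer grid trades musical phrasing for responsiveness.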

Each switch (or state) contains the music objects related to that particular alternative. In this example, all of a game’s music segments related to fight sequences would be grouped into the “Fight” switch, all of the music segments related to stressful situations would be grouped into the “Stress” switch and so on. When the game calls the Switch container, Wwise checks which switch or state is currently active to determine which container or music segment to play. With the Music Editor window, I was able to control how a music segment behaves and the context in which it plays. With Wwise, I layered multiple segments on top of each other to create richer musical textures, and I was able to dynamically introduce layers (tracks) of music segments based on game events. With this, I got killer music interactivity for games. For even more interactivity, you can play stingers at key points in the game action.
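The switch-and-layer behavior described above can be modeled in a few lines of Python. This is a simplified sketch under stated assumptions: the class names, the fallback to a default switch and the intensity rule for adding tracks are all hypothetical and do not reflect how Wwise works internally.

```python
from dataclasses import dataclass


@dataclass
class MusicSegment:
    name: str
    tracks: list[str]  # layers, ordered from the base layer upward


@dataclass
class MusicSwitchContainer:
    children: dict[str, MusicSegment]  # switch value -> music segment
    default: str                       # hypothetical fallback switch value

    def resolve(self, active_switch: str) -> MusicSegment:
        # Pick the child assigned to the active switch, else the default child.
        return self.children.get(active_switch, self.children[self.default])


def active_layers(segment: MusicSegment, intensity: int) -> list[str]:
    # Hypothetical rule: the base track always plays, and each extra
    # intensity step brings in one more track, up to the total available.
    count = max(1, min(intensity, len(segment.tracks)))
    return segment.tracks[:count]


# Illustrative container mirroring the Fight/Stress/Stealth example.
music = MusicSwitchContainer(
    children={
        "Fight": MusicSegment("Fight", ["drums", "bass", "brass"]),
        "Stress": MusicSegment("Stress", ["pad", "pulse"]),
        "Stealth": MusicSegment("Stealth", ["strings"]),
    },
    default="Stealth",
)
```

For instance, `active_layers(music.resolve("Fight"), 2)` yields the drums and bass layers, and a game event that raises the intensity to 3 would bring in the brass on top.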

One of the coolest interactive music features in Wwise is the ability to define rules known as Transitions, which help bridge the gap between two music segments that would sound awkward if switched directly. Think of a transition as a custom fix the composer writes to resolve the segue between two segments. When a transition is required, Wwise scans the Transition Matrix from bottom to top to find a rule that matches the situation, and it stops at the first match, whether or not that is the best transition to apply. To apply transitions optimally, I therefore ordered the rules in the Transition Matrix from general (at the top) to specific (at the bottom), so that the most specific matching rule is always found first.
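The bottom-to-top, first-match lookup is why rule order matters. Here is a minimal Python sketch, assuming each rule names a source and a destination segment, with "*" as a hypothetical wildcard for "any segment"; the rule fields and action strings are illustrative, not Wwise’s actual data format.

```python
from dataclasses import dataclass

ANY = "*"  # hypothetical wildcard matching any segment


@dataclass(frozen=True)
class TransitionRule:
    source: str
    destination: str
    action: str  # illustrative description of what the transition does

    def matches(self, src: str, dst: str) -> bool:
        return self.source in (ANY, src) and self.destination in (ANY, dst)


def find_transition(matrix: list[TransitionRule], src: str, dst: str):
    # Scan from the bottom of the list upward and stop at the first match,
    # without checking whether a "better" rule exists higher up.
    for rule in reversed(matrix):
        if rule.matches(src, dst):
            return rule
    return None


# Rules listed from general (top) to specific (bottom).
MATRIX = [
    TransitionRule(ANY, ANY, "exit at next bar"),
    TransitionRule("Explore", ANY, "exit at next beat"),
    TransitionRule("Explore", "Fight", "play bridge segment"),
]
```

With this ordering, Explore to Fight uses the bridge, Explore to anything else exits on the next beat, and every other change falls through to the catch-all. Placing the catch-all rule at the bottom instead would make it match every request first, hiding the more specific rules above it.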

SPATIALIZATION, CAR SIMULATOR

With the newly redesigned 3-D Spatialization system, spatialization and attenuation are now independent. I could control the attenuation of dry volume, wet volume, LFE and the lowpass filter independently of one another. Using the Spread functionality, I was able to control, gradually and as a function of distance, whether a sound is emitted as a point source or as a completely diffuse sound; in effect, Spread acts as an interactively controlled divergence.
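To illustrate what independent attenuation means in practice, the sketch below evaluates a separate distance curve for each property. The curve points, units and values are made-up examples, the linear interpolation between points is an assumption, and the direction of the Spread curve (diffuse up close, point source farther away) is just one possible setting.

```python
from bisect import bisect_right


def interp(curve: list[tuple[float, float]], distance: float) -> float:
    """Piecewise-linear lookup; curve is a list of (distance, value) points sorted by distance."""
    xs = [x for x, _ in curve]
    if distance <= xs[0]:
        return curve[0][1]
    if distance >= xs[-1]:
        return curve[-1][1]
    i = bisect_right(xs, distance)  # first point strictly beyond this distance
    (x0, y0), (x1, y1) = curve[i - 1], curve[i]
    t = (distance - x0) / (x1 - x0)
    return y0 + t * (y1 - y0)


# Hypothetical curves: each property attenuates on its own schedule.
CURVES = {
    "dry_volume_db": [(0.0, 0.0), (50.0, -12.0), (100.0, -96.0)],
    "wet_volume_db": [(0.0, -12.0), (100.0, -6.0)],
    "lfe_db": [(0.0, 0.0), (30.0, -24.0)],
    "lowpass_amount": [(0.0, 0.0), (100.0, 60.0)],
    "spread_pct": [(0.0, 100.0), (20.0, 0.0)],  # 100 = fully diffuse, 0 = point source
}


def evaluate(curves: dict[str, list[tuple[float, float]]], distance: float) -> dict[str, float]:
    # Each property is looked up on its own curve, independently of the others.
    return {name: interp(curve, distance) for name, curve in curves.items()}
```

Because every property has its own curve, the LFE can drop out within 30 units while the dry signal is still fairly loud, and the wet level can even rise with distance.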

In addition, for sound designers working on games with vehicles, one handy tool is the Car Engine Simulator (known as CarSim), a small app that simulates vehicle physics tied to Real-Time Parameter Controls (RTPCs), which can correspond to values such as a game’s throttle and rpm. This app will be indispensable to sound designers who want to ensure that engine recordings made at different rpms crossfade properly (using a Blend container) and to find any anomalies in the overall sound of the car engine. However, this is only an offline tool: The only way to be certain that a vehicle’s engine sound works properly is to drive the vehicle in the game and confirm that the engine sound performs as expected.
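The crossfading that CarSim helps verify can be approximated as follows: given an rpm value from an RTPC, fade between the two nearest engine recordings. This is a minimal sketch assuming an equal-power (sine/cosine) crossfade between neighboring recordings; a real Blend container uses whatever crossfade curves the sound designer defines, and the rpm values in the usage note are hypothetical.

```python
import math


def blend_gains(rpm: float, recorded_rpms: list[float]) -> dict[float, float]:
    """Return a linear gain for each engine recording at the given rpm."""
    points = sorted(recorded_rpms)
    gains = {r: 0.0 for r in points}
    # Below the lowest or above the highest recording, play that recording alone.
    if rpm <= points[0]:
        gains[points[0]] = 1.0
        return gains
    if rpm >= points[-1]:
        gains[points[-1]] = 1.0
        return gains
    # Otherwise crossfade between the two neighboring recordings.
    for low, high in zip(points, points[1:]):
        if low <= rpm <= high:
            t = (rpm - low) / (high - low)
            # Equal-power crossfade: cos^2 + sin^2 = 1, so total power stays constant.
            gains[low] = math.cos(t * math.pi / 2)
            gains[high] = math.sin(t * math.pi / 2)
            break
    return gains
```

With recordings at 1,000, 3,000 and 5,000 rpm, an RTPC value of 2,000 rpm plays the first two recordings at equal gain (about 0.707 each) and mutes the third. Sweeping the rpm smoothly from idle to redline and listening for dips or bumps at each crossover point is the kind of anomaly hunting CarSim is meant for.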

NEXT-GEN INTERACTIVE AUDIO TOOL

Overall, I found the interactive music toolset within Wwise to be extremely powerful, and I see it as a great first step in addressing a formidable challenge for both videogame developers and composers. I say first step because I wish the interactive music toolset and engine supported time stretching, allowing more automated syncing of music cues, such as that found in Ableton Live or Sony Acid. I would also like to see more pro audio-quality algorithms (such as better reverbs and filters) featured in audio middleware packages.

That said, I highly recommend Wwise for any interactive audio creator or music composer looking for a commercially available interactive system built to bridge the gap between technology and audio/music authoring for videogames.

Audiokinetic, 514/499-9100, www.audiokinetic.com.

Michel Henein is a freelance sound designer and co-owner of Diesel Games in Arizona.